Copying Without Pride: Engineering the Greatest Businesses

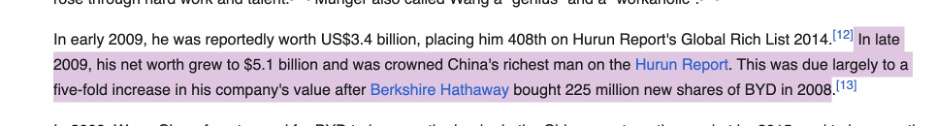

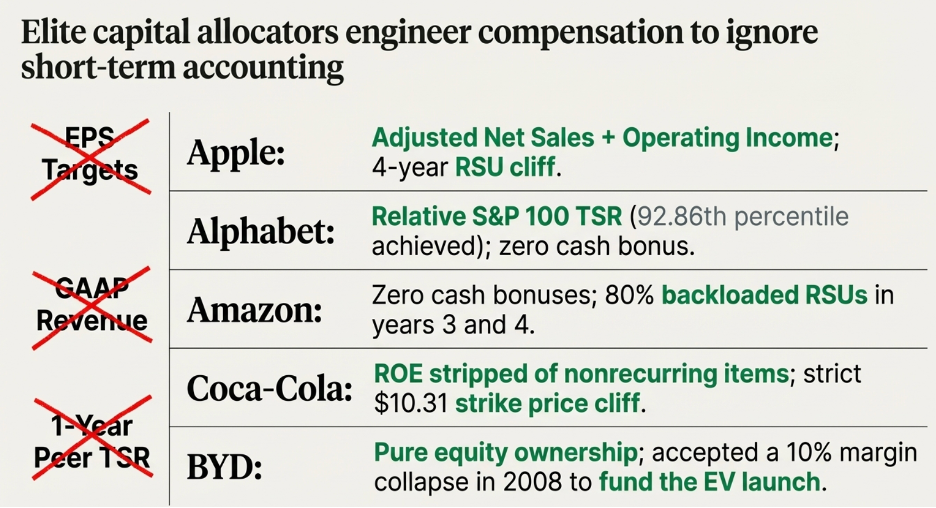

A SOYA Investing Case Study — Apple · Alphabet · Amazon · Coca-Cola · BYD

Preface

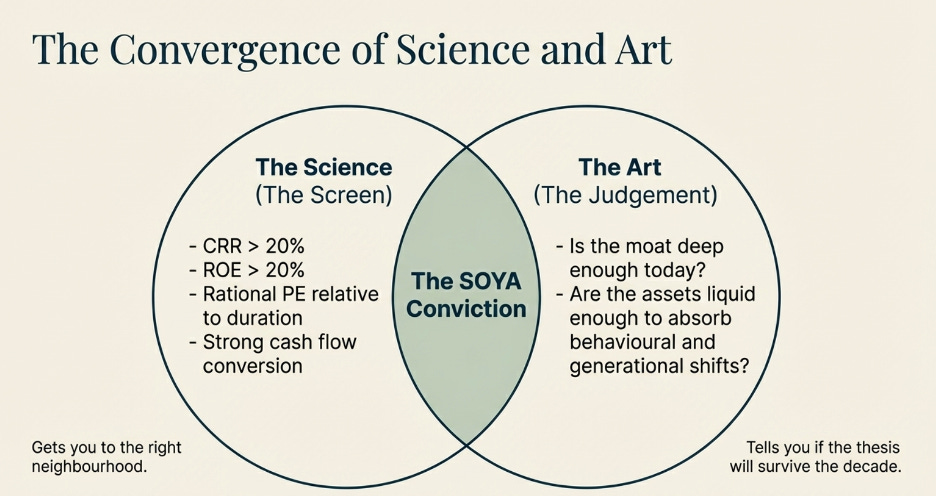

The five companies in this paper were each identified as exceptional investments years before the wider market agreed. Not through luck. Not through access to information others did not have. Through a way of reading businesses that most financial training actively discourages — one that looks past this quarter’s earnings and asks a different question entirely: how long can this business keep doing what it is doing right now?

That question sounds simple. Answering it rigorously is the work of a lifetime.

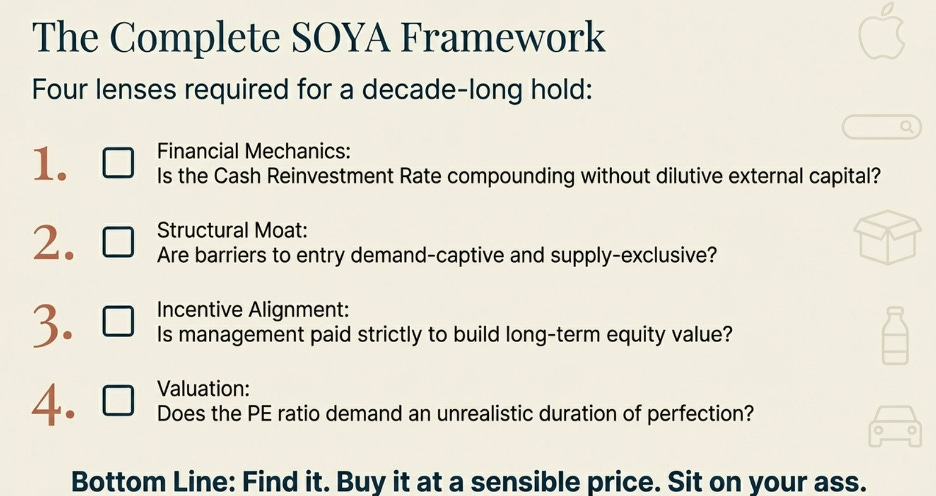

What follows is the framework behind those five investments — and behind every investment worth holding for a decade. It begins by reframing the most misused metric in public markets. It then builds, piece by piece, the tools for understanding why certain businesses are structurally difficult to compete with. And it ends with the five case studies themselves, each dissected to show exactly why they qualified — and where the limits of that qualification sit.

The discipline of holding great businesses through the noise of markets and the second-guessing of peers has a name: SOYA investing. Sit On Your Ass. Charlie Munger refined it over 50 years. The principle is that the greatest returns in equity do not come from more frequent action. They come from finding a business whose advantages will compound for a very long time, buying it before the market has fully priced how long that will be, and then having the patience to let the compounding run. The finding and the patience are both hard. Most investors fail at the patience even when they succeed at the finding.

This paper is about the finding. Specifically, it is about building the mental architecture to know — with conviction — when a business deserves your patience.

Part One: The PE Ratio Is a Question, Not an Answer

The Number Everyone Quotes and Almost Nobody Understands

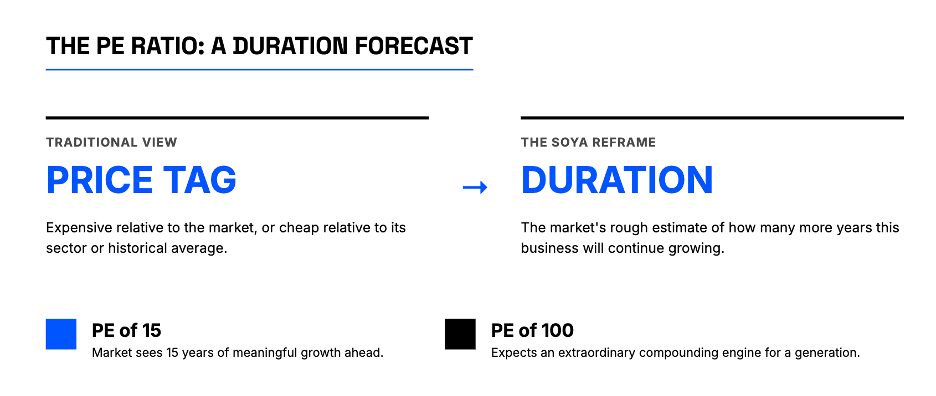

The price-to-earnings ratio is the first metric any analyst reaches for and the one most consistently misread. Ask a room full of fund managers what a PE of 30 means and the answer will come back as a comparison: expensive relative to the market, or cheap relative to its sector, or in line with its historical average. What will almost never come back is a description of what the number is actually saying about the business — what belief about the future it encodes.

Here is a cleaner way to think about it.

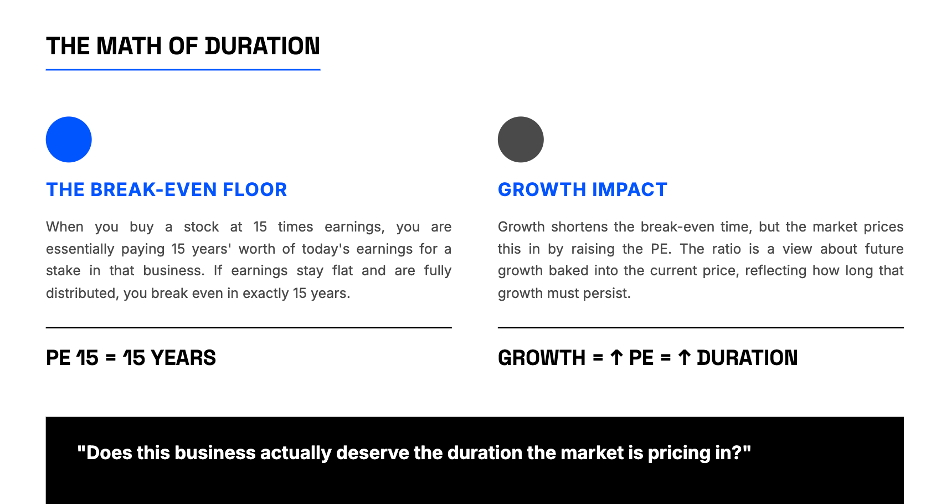

When you buy a stock at 15 times earnings, you are paying 15 years’ worth of today’s earnings for a stake in that business. If the business earns exactly the same amount every year for the next 15 years and distributes all of it, you have broken even in nominal terms. That is the floor — the case where nothing about the business changes. But of course, businesses do not stay flat. They either grow or they shrink. And the price you paid — 15 times earnings — already has a view about which direction it will go baked into it.

Think of the PE ratio this way: it is the market’s rough estimate of how many more years this business will continue growing at approximately its current rate. A PE of 15 says the market sees perhaps 15 more years of meaningful growth ahead. A PE of 40 says it sees far more — four decades worth, or growth at a rate that more than compensates for fewer years. A PE of 100 says the market expects this to be an extraordinary compounding engine for a generation, or it has completely lost its grip on rational valuation. The number is not a price tag. It is a duration forecast.

That reframe changes everything about how you use the metric. The question is no longer “is this PE high or low?” The question is: does this business actually deserve the duration the market is pricing in?

This is where the real analytical work begins, and it is what the rest of this framework is built to answer.

When Precision Matters, and When Approximation Is Enough

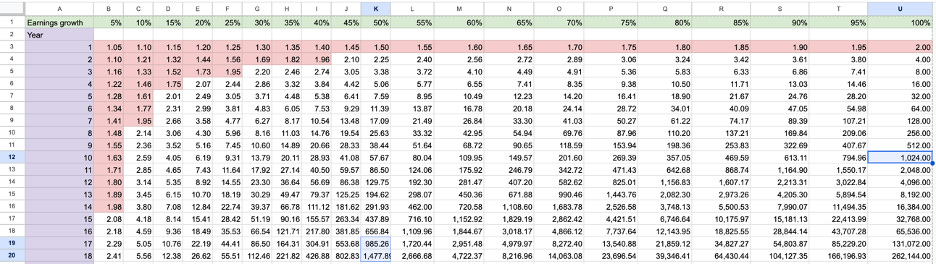

The PE-as-duration framework can be made mathematically precise, and there are situations where that precision is exactly what you need.

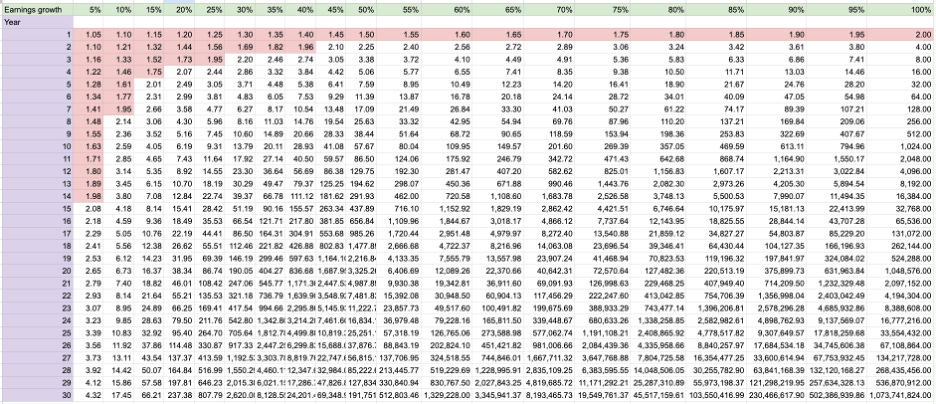

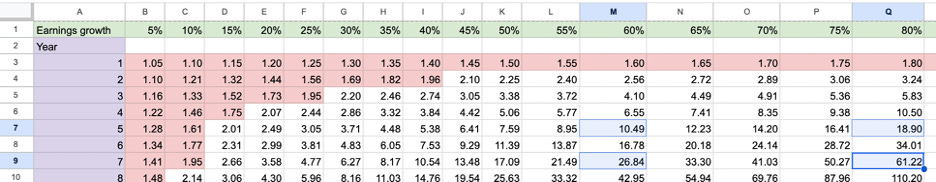

The clearest case is a business whose competitive advantage has a known expiry date. A pharmaceutical company with a patented drug, for instance, has a moat that will legally cease to exist on a specific calendar date. When that patent expires, generic manufacturers will enter the market, prices will collapse, and the earnings power that justified the current valuation will erode sharply. In that situation, you can count the years until expiry, examine the earnings growth rate, and work out with reasonable rigor whether the current PE is justified by the duration that remains. The table does what it says: it translates growth rate and years into a valuation anchor. The precision is available and it matters.

The other situation where exactness is warranted is at the high end of the growth spectrum. When a business is growing earnings at 60% or 80% per year, the difference between five years of that growth and seven years is not marginal — it is the difference between a business worth three times its current earnings base and one worth eight times. Each additional year of high-rate compounding adds so much terminal value that your duration estimate has to be reasonably precise to avoid a large valuation error in either direction. For high-growth companies, the table is a useful guard against both excessive generosity and excessive cynicism about how long the growth can run.

For most businesses, though, and for the five in this study, the PE ratio is best used as a directional lens rather than a precision instrument. The question it is helping you answer is coarser but no less important: does the duration the market is pricing into this stock match what you can see when you examine the competitive structure of the business? If the market is pricing 25 years of sustained growth into a company growing at 10% whose moat is thin and whose industry is contested, the stock is mispriced regardless of how attractive the brand feels. If the market is pricing 12 years of growth into a company with structural advantages that should last well beyond that, you may be looking at an entry point.

The framework in Part Two is what makes that assessment possible. But before building it, there is one example worth walking through in detail — not because the math resolves neatly, but because the reasoning it demands illustrates exactly what the duration frame is for

.

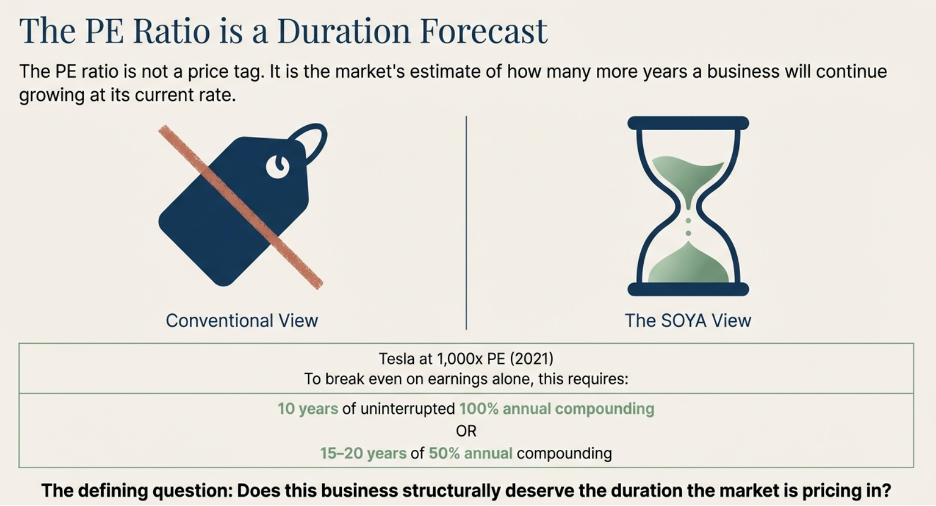

Tesla at 1,000 Times Earnings: What the Table Actually Tells You

In late 2021, Tesla’s stock briefly reached a valuation of approximately 1,000 times trailing earnings. Most commentary at the time reached for peer comparisons — Tesla versus Toyota, Tesla versus GM — to illustrate how extreme the number was. That comparison, while viscerally satisfying, misses the more important analytical question. The right frame is not “how does this compare to other car companies?” The right frame is: what does a PE of 1,000 require to be true about the business, and is any of it visible in Tesla’s actual competitive structure?

Start with the table. A PE of 1,000 at a 100% annual earnings growth rate — which would rank among the highest sustained earnings growth rates ever achieved by a large-cap company — still requires approximately 10 years of uninterrupted compounding before the investment recovers its cost from earnings alone. Ten consecutive years of doubling earnings, just to break even on the entry price. That is the floor, not the ceiling. At a more realistic and still very aggressive 50% annual earnings growth, the duration extends to somewhere between 15 and 20 years. The table is not being conservative here. It is doing arithmetic.

Now ask the obvious question: what would need to be true about Tesla’s competitive position for 10 to 20 years of extraordinary earnings growth to be achievable?

The answer is that Tesla would need to defend its position in the electric vehicle market against every serious competitor that entered for that entire period without its earnings growth rate meaningfully declining. Not just for one year, not just through one competitive cycle, but for a decade or more — across multiple generations of EV technology, multiple rounds of battery innovation, and against the full weight of every major automaker in the world re-engineering their businesses around the exact product category Tesla had pioneered.

This is where the PE of 1,000 collapses under inspection. Not because Tesla was a poor company — it was, by any reasonable measure, a genuinely impressive manufacturer that fundamentally changed the trajectory of the industry. The problem is structural: Tesla had no mechanism to protect the earnings growth rate that the PE was pricing in. This brings us to our next section: How can/ does a well-run business structurally protect their earnings growth rate (which brings up to our next section — structural advantages which protects a business earnings, and its growth).

Part Two: What Actually Protects a Business

Forget Porter. Focus on One Thing.

Michael Porter’s Five Forces framework is taught in every MBA programme in the world. It is genuinely useful for one purpose: generating a structured list of industry characteristics to discuss in a strategy meeting. As a tool for identifying whether a business has a durable competitive advantage, it is largely a distraction.

The reason is that four of the five forces are not causes — they are symptoms. A business experiencing intense competitive rivalry does so because barriers to entry are low enough that new competitors found it rational to enter. A business suffering from supplier power does so because it lacks the supply-side advantages that would give it pricing leverage over its own inputs. A business facing powerful buyers does so because it has not established the switching costs or habits that reduce those buyers’ negotiating position. The threat of substitutes is simply a restatement of whether customer captivity is strong enough to resist alternatives.

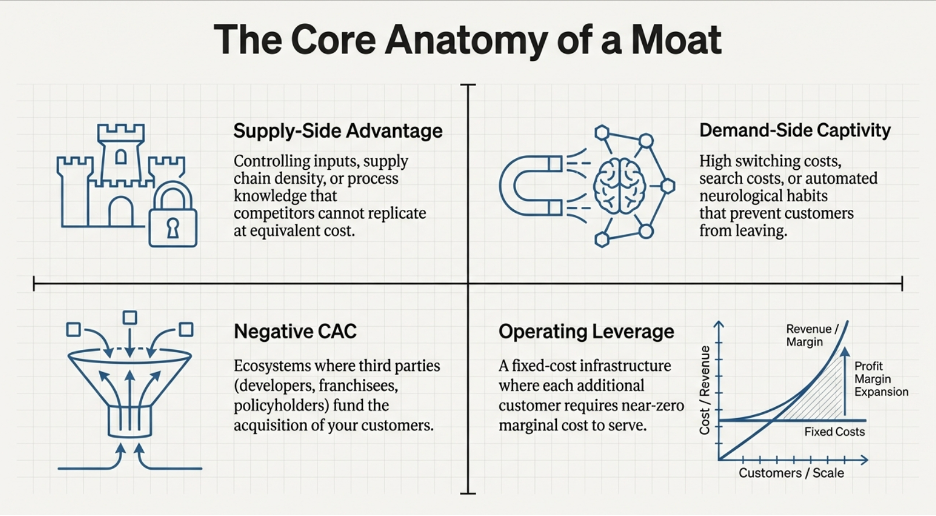

Strip away the symptoms and what remains is the one force that actually matters: barriers to entry. If barriers to entry are high enough, competitive rivalry stays manageable. Supplier power stays containable. Buyer power stays limited. The threat of substitutes stays low. Everything else in Porter’s model is a downstream consequence of this one upstream condition.

This simplification changes how you look at businesses. Instead of working through five parallel analyses, each producing its own list of considerations, you ask one question: why would a well-funded, highly capable competitor — a serious team with patient capital, strong talent, and no resource constraints — fail if they tried to enter this market today? If the honest answer is that they would gain meaningful traction within three to five years, the business has no durable moat. If the honest answer is that they would fail, and you can articulate the precise structural mechanism by which they would fail, then you have found something worth examining deeply.

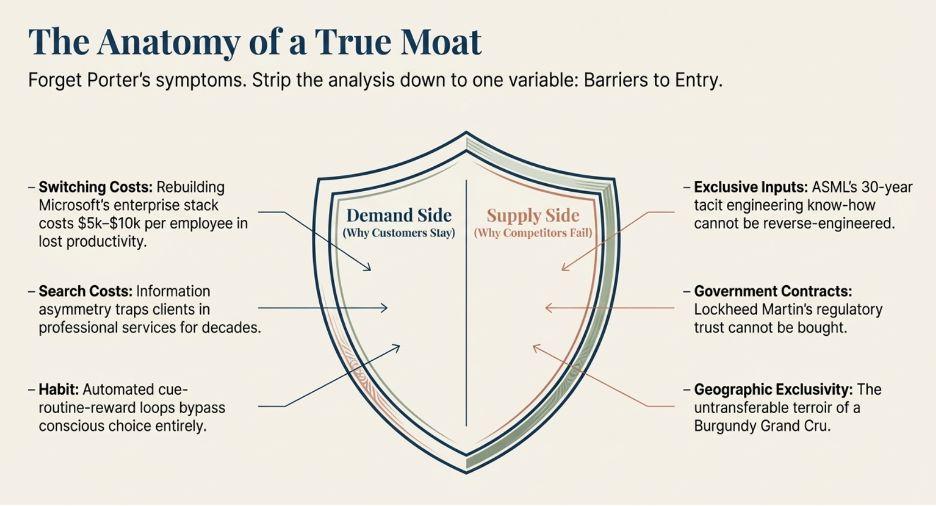

Barriers to entry come from two directions. Every business faces its customers on the demand side, and the rest of the world on the supply side. A barrier can come from either, or — in the most powerful businesses — from both simultaneously.

The Demand Side: Three Reasons Customers Stay

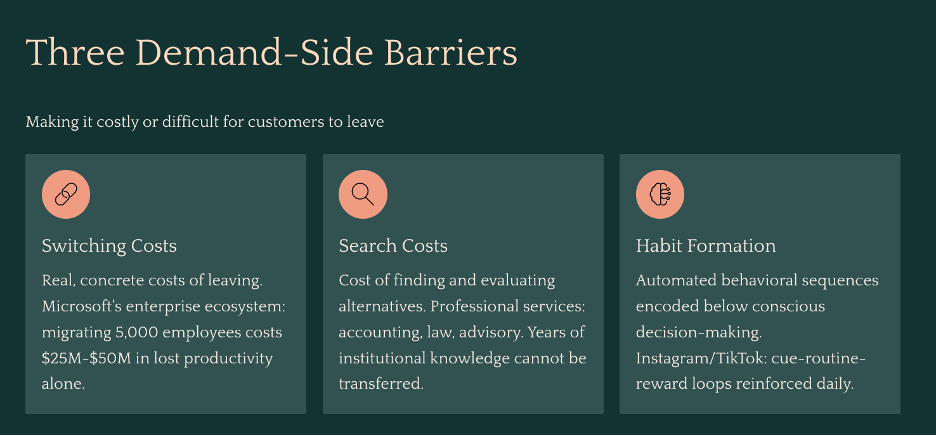

Demand-side barriers work by making it costly or difficult for customers to leave, regardless of how good a competitor’s product becomes. There are three distinct mechanisms through which this happens.

The Cost of Switching

The most direct demand-side barrier is when leaving you imposes a real, concrete cost on the customer — one large enough that a competitor’s pricing or feature advantage cannot rationally justify bearing it.

The clearest example in the world today is Microsoft’s enterprise ecosystem. Picture a company of 5,000 employees. Over the past decade, they have built their entire operational infrastructure on Microsoft’s stack. Every employee logs in through Azure Active Directory, the identity management system that serves as the digital front door to every application in the company. Their communication runs on Teams — years of chat history, meeting recordings, project channels, and file threads. Their files live in SharePoint, which the operations team has spent years organising into department-specific sites, permission structures, and workflows. Their email — including institutional correspondence, compliance archives, and client history — runs on Outlook through Exchange. Sales and customer data runs on Dynamics 365. Security policy, device management, and compliance monitoring runs through Microsoft Intune and Microsoft Defender, configured over years to match the company’s specific legal and regulatory requirements.

Now a competitor — say, Google Workspace — approaches with a compelling pitch. Better collaboration tools. Competitive pricing. Modern interface.

The IT director does not evaluate this as a features comparison. They evaluate it as a risk and cost assessment. To switch, the company would need to migrate five years of email history from Exchange to Gmail — not just the emails, but the rules, the shared mailboxes, the calendar integrations. They would need to rebuild every SharePoint site in Google’s equivalent tools, recreating the permissions structure, the workflows, and the institutional organisation that hundreds of employees built over years. They would need to replace Azure Active Directory as the identity layer — which means reconfiguring the sign-on credentials of every third-party application in the company that currently authenticates through Microsoft. They would need to retire Teams and migrate to Google Meet, accepting the permanent loss of all chat history, all meeting recordings, and the entire thread of internal institutional memory that lives in those channels. And for each of the 5,000 employees, they would need to fund retraining: not just a one-hour tutorial, but the weeks of reduced productivity and the months of re-establishing habitual fluency that comes with a platform transition.

Before a single consulting fee has been paid, the estimate typically runs between $5,000 and $10,000 per employee in lost productivity, training cost, and project management overhead alone. For a 5,000-person company, that is $25 million to $50 million — and 12 to 18 months of organizational disruption — in exchange for saving perhaps $5 to $10 per user per month in licensing fees. The math does not work. It almost never works. And Microsoft knows this, which is why the pricing strategy for Microsoft 365 is not to be the cheapest option. It is to be embedded deeply enough that the cost of evaluating alternatives is itself prohibitive, long before the cost of actually switching is even reached.

This is what switching cost looks like as a structural moat. The product does not need to win on features every year. It simply needs to ensure that the cost of replacing it grows faster than any competitor’s ability to offer a reason to bear it.

The Cost of Searching

The second demand-side barrier is less discussed but equally powerful in the right context: the cost to the customer of finding and evaluating an alternative, independent of the cost of switching to it.

This barrier is most naturally present in services — and understanding why helps clarify where to look for it.

When you buy a physical product, you can evaluate it before committing. You can read reviews from thousands of prior customers, examine the specifications, return it if it fails to deliver. The information required to make a confident purchase decision is largely available before you spend a dollar.

Services are fundamentally different. A service is, by nature, intangible and experienced only after the relationship begins. More importantly, the quality of most services depends on context that is specific to you: the lawyer needs to know your case history, the accountant needs to know your business structure and tax position, the financial advisor needs to know your risk tolerance and long-term goals, the IT support provider needs to know your systems architecture. This institutional knowledge cannot be transferred completely to a new provider — it must be rebuilt, imperfectly and over time, at the new provider’s learning curve and at your expense.

Consider a mid-sized business that has worked with the same accounting firm for eight years. The firm knows the nuances of the business’s industry, the history of every tax position ever taken, the relationships with local tax authorities, and the particular sensitivities of the founders’ personal and corporate finances. To switch firms, the business does not simply find a new accountant and hand over a spreadsheet. They spend months transferring institutional context that can never be fully conveyed. They pay the new firm to learn what the old firm already knows — at full billing rates during the learning period. And they accept a period of elevated risk: the new firm, unfamiliar with the specifics of the business, is more likely to miss something in year one than the incumbent who has seen eight years of the business’s full picture.

The search cost here is not just the effort of finding an alternative. It is the cost of the information asymmetry the customer cannot resolve before committing. You cannot know whether the new firm is as good as the incumbent until you have already been inside the relationship for a year or more. A competitor could be genuinely superior on every measurable dimension and the rational customer would still require a very compelling reason to absorb the evaluation risk.

This is why professional services firms — accounting, law, insurance brokerage, medical practices, financial advisory — often retain clients for decades with minimal active effort to retain them. The cost of searching for and validating an alternative is simply high enough that departure requires an extraordinary catalyst. A minor service improvement from a competitor does not clear that bar. The incumbent collects the revenue of a deeply embedded relationship while a new entrant must offer a step-change in value just to get the customer to begin an evaluation.

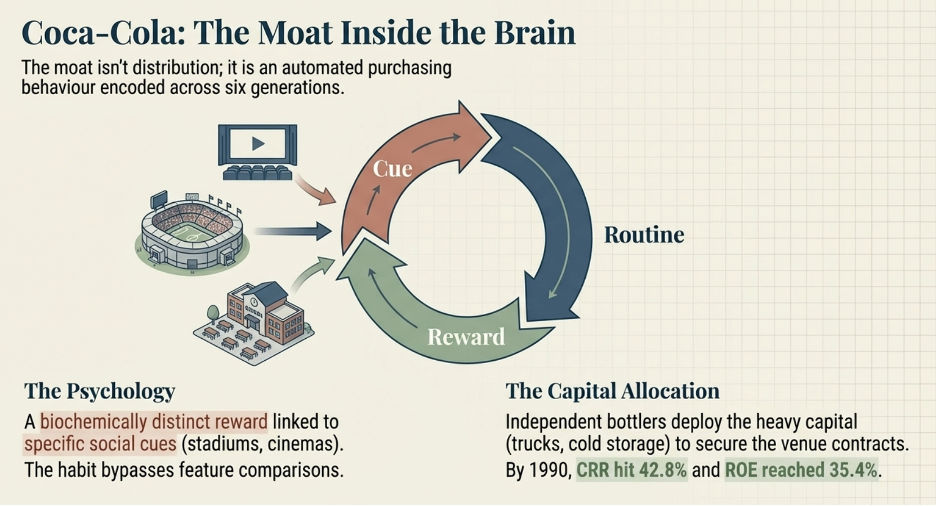

Habit

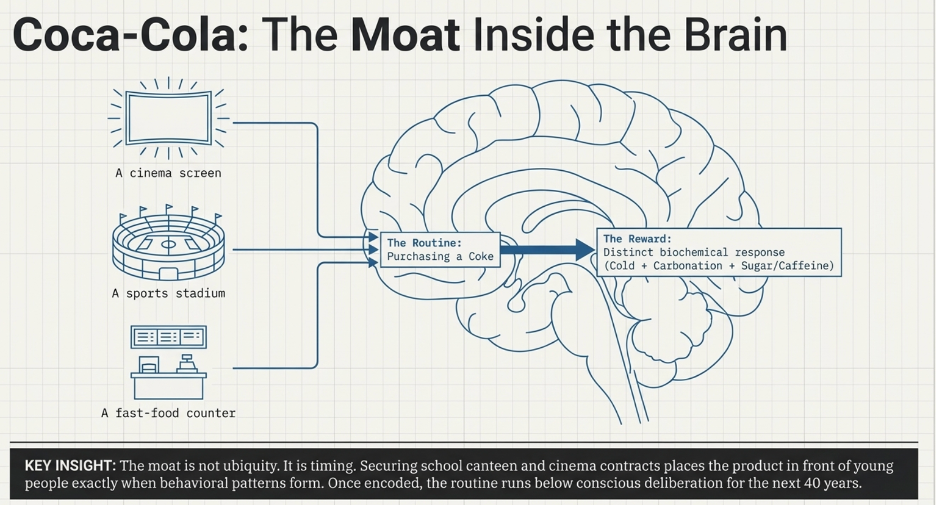

The third demand-side barrier is the deepest, because it operates entirely below the level of conscious decision-making. When a habit is fully formed, the customer is not choosing your product each time they reach for it. They are executing an automated behavioral sequence that was encoded years earlier and now runs without deliberation.

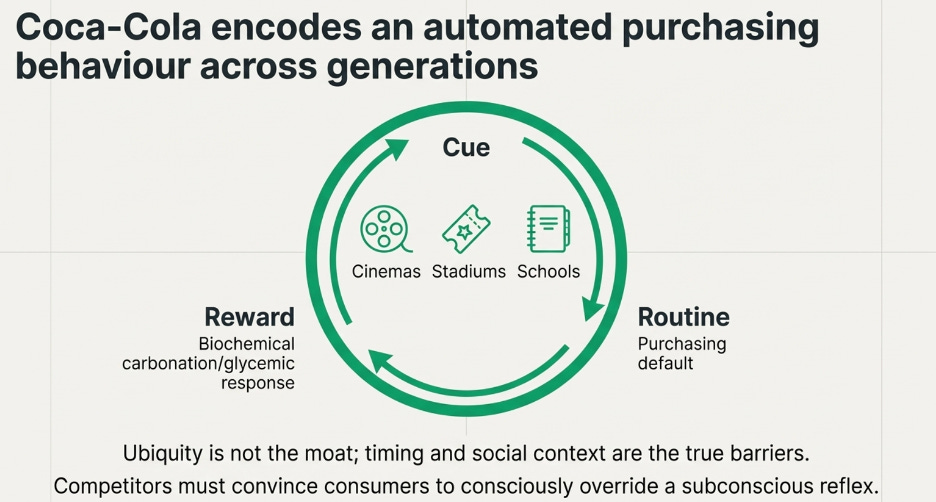

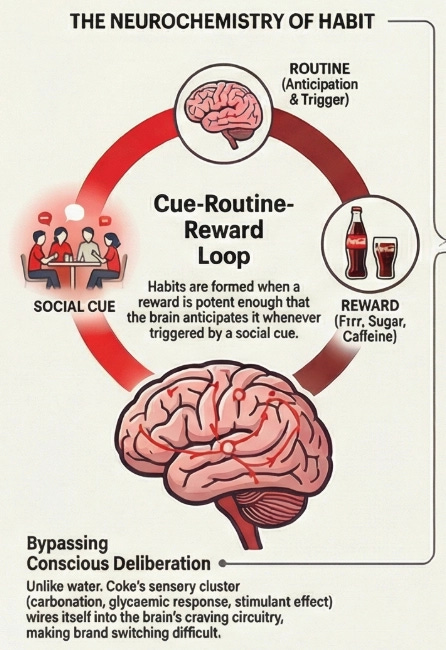

The psychology behind this was formalised by behavioural researcher Charles Duhigg, drawing on decades of neuroscientific work: habits form through a three-part loop. A cue in the environment triggers a routine, and a reward follows. Repeat the loop enough times in enough contexts, and the brain internalises the routine as the automatic response to the cue. The decision is, in the most literal neurological sense, removed from conscious consideration. The cue appears. The behaviour fires.

Coca-Cola is the most thoroughly studied example of a brand that engineered this loop at a civilisational scale — and it is examined in detail in the company case study. But the mechanism is not unique to consumer beverages. A second example makes the psychological machinery visible in a completely different context.

Consider what happens when you open Instagram or TikTok. You pick up your phone during a moment of downtime — standing in a queue, lying in bed before sleep, waiting for a meeting to start — and within seconds you are scrolling. You did not make a decision to scroll. You responded to a cue (boredom, idle hands, a moment of transition) with a routine (open the app, begin scrolling) that produces a reward. The reward, critically, is variable. Sometimes you scroll past ten unremarkable posts and find nothing. Sometimes the next post is something that makes you laugh, or feel something, or discover something you wanted to know. You never know when the reward will arrive. That unpredictability is not a design flaw. It is the engine.

B.F. Skinner, the behavioural psychologist, demonstrated this decades before smartphones existed. In his experiments, he placed rats in a chamber with a lever. One group of rats found a food pellet every time they pressed the lever — consistent reward. Another group found a pellet unpredictably — sometimes after one press, sometimes after ten, sometimes after fifty. The second group pressed the lever far more frequently, far more persistently, and far more compulsively than the first. Predictable rewards produce moderate, manageable behavior. Variable rewards produce compulsive, difficult-to-extinguish behavior.

The red notification badge on a social media app is a manufactured cue built on exactly this principle. Every time you see it, the brain anticipates a variable reward — a like, a comment, a message, something interesting — and fires the routine before conscious deliberation has a chance to intervene. The app has engineered the cue-routine-reward loop to run automatically, at high frequency, across dozens of daily moments. The habit does not require the app to be the best app. It requires the loop to have been established early and reinforced often enough that the brain treats it as the default response to a set of triggers that occur dozens of times every day.

For a competitor seeking to displace a habituated user, the challenge is not building a better product. It is producing something compelling enough to override an automated behavioral response, consistently, across every context in which the trigger fires, for long enough that the new behavior becomes the new habit. That is an extraordinarily high bar. Most competitors never clear it, and the ones who do typically achieve it only by exploiting a paradigm shift large enough to make the existing habit context-inappropriate — the way smartphones made the desktop browser habit irrelevant for most daily use cases.

The Supply Side: Three Reasons Competitors Cannot Replicate You

Supply-side barriers work differently. Instead of making it hard for customers to leave, they make it structurally difficult or impossible for competitors to build what you have built — even with the capital, talent, and determination to try.

Access to Inputs — Either Exclusively or at a Cost Others Cannot Match

The most direct supply-side advantage is controlling something a competitor needs but cannot acquire at equivalent cost or at all.

The most striking example in modern industry is ASML, the Dutch company that manufactures the extreme ultraviolet lithography machines required to produce the world’s most advanced semiconductors. ASML is the only company on earth that can build these machines. The barriers are not primarily financial — a well-capitalised competitor could theoretically attempt to build a rival programme. The barriers are structural: ASML holds over 4,000 patents directly related to EUV lithography, and those patents protect only the parts of the process that can be disclosed. Beneath the patents sits three decades of accumulated engineering knowledge — the specific mirror coatings, the plasma source chemistry, the vibration isolation techniques, the yield optimisation protocols — that exist in the institutional memory of the company’s engineers and the tacit knowledge embedded in its production processes. A competitor could hire a team of brilliant optical engineers, invest $10 billion, read every ASML patent, and still spend a decade arriving at process maturity that ASML achieved through 30 years of continuous refinement. The knowledge cannot be transferred by acquisition or reverse-engineered from the product. It can only be built through time, and time is exactly what a competitor does not have.

A simpler but equally vivid version of exclusive access is geographic: a Grand Cru vineyard in Burgundy, France. The wine produced from a specific plot in Romanée-Conti sells for thousands of dollars a bottle because the specific combination of soil composition, drainage, microclimate, and centuries of agricultural understanding that produces the wine exists in that precise plot of land and nowhere else on earth. A competitor with unlimited capital cannot replicate the supply. They cannot move the soil. They cannot recreate the microclimate. They can purchase an adjacent plot, but the terroir is not transferable. The supply advantage is, in the most literal sense, geographically exclusive.

Government Relationships and Contracts

Regulatory protection and established government relationships are a supply-side barrier that most investment frameworks underappreciate, partly because they are slow to develop and invisible until they are tested.

Lockheed Martin’s position in US defence contracting illustrates the mechanism. To compete for a major military aircraft programme — the kind of multi-decade contract that involves classified systems integration, crew safety certification, and national security infrastructure — a new entrant would need to build and maintain facilities certified for handling classified information, employ personnel who have individually undergone extensive government security clearance processes that take years and cannot be accelerated, establish a performance track record with defence procurement agencies through smaller contracts before being trusted with larger ones, and navigate a procurement system that is, by design, biased toward established contractors because the political and operational consequences of contractor failure in defence are catastrophic in ways that failure in a commercial context is not. A Senator whose district contains a Lockheed facility and whose constituents’ employment depends on a programme is not a neutral evaluator of whether a new entrant deserves consideration.

None of this is corruption or impropriety. It is the natural consequence of operating in a domain where trust is built over decades, verified through regulatory frameworks that take years to navigate, and grounded in a track record that new entrants structurally cannot possess. The barrier to entry is not capital. It is time and institutional standing, and those are not available for purchase at any price.

Patents and Proprietary Know-How

Patents and proprietary know-how are supply-side barriers of different character and duration, and distinguishing between them matters for how long you expect the protection to last.

A patent is explicit, legally enforced, and time-limited. It grants the holder a monopoly on a specific innovation for a defined period — typically 20 years — after which it enters the public domain. The value of a patent-based moat is therefore calculable and finite. This is precisely the circumstance in which the PE duration table discussed in Part One becomes a precision instrument rather than a directional guide: count the years until expiry, map them against the earnings growth rate, and assess whether the current valuation is justified by the duration that remains. The certainty cuts in both directions — a business with a moat that expires in three years is a business with three more years of protected earnings, and the market should price it accordingly.

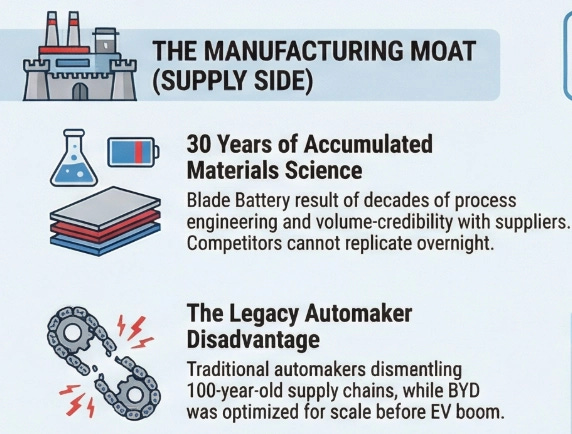

Proprietary know-how is altogether different, and most of the time, better. It is not registered, not legally enforced, and has no defined expiry date. It exists in the accumulated process knowledge, manufacturing expertise, material science understanding, and institutional memory that a company builds over years of doing something at scale. Intel’s sustained struggle to match TSMC’s semiconductor manufacturing yields, despite having world-class engineering talent and decades of experience, illustrates the point: the process knowledge embedded in TSMC’s factories is not something that can be replicated by hiring smart engineers or studying published research. It lives in the specific sequence of decisions, optimisations, and hard-won failure modes that a manufacturing organisation has navigated over 30 years of running these processes continuously. That knowledge, in the most literal sense, cannot be transferred. It can only be accumulated through time, and no amount of capital investment shortens the timeline.

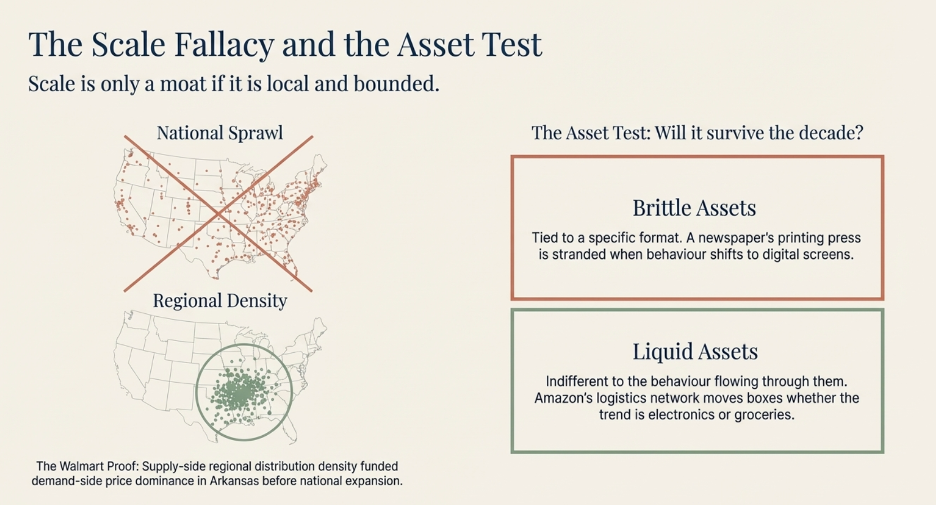

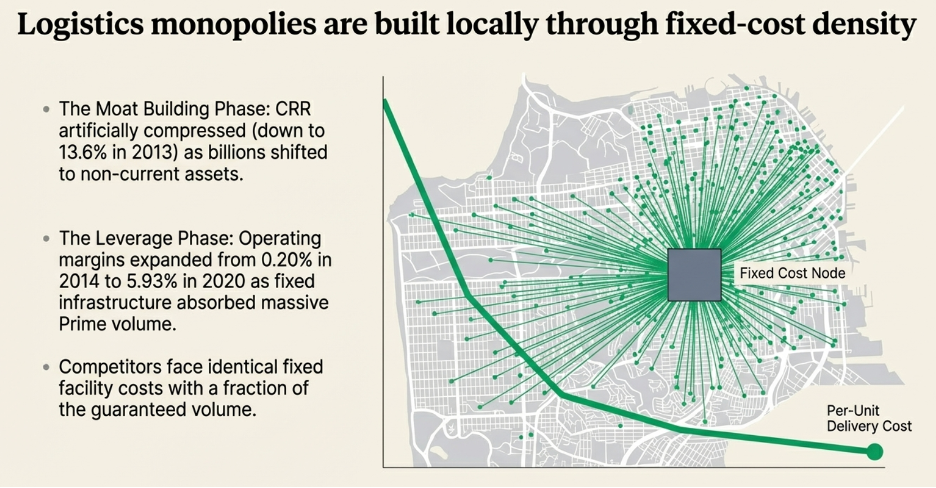

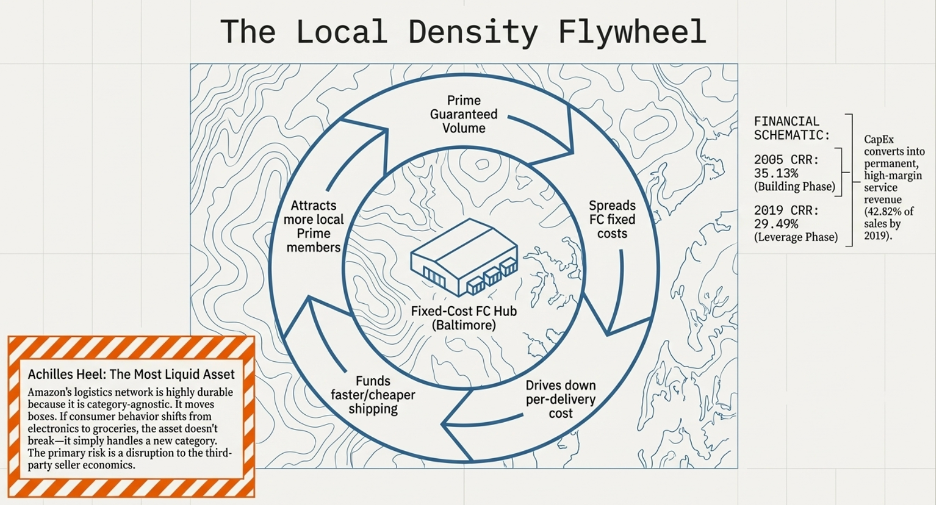

Economies of Scale: The Third Force, and Why It Is Always Local

Economies of scale are the most frequently cited and most widely misunderstood source of competitive advantage. The mistake is treating scale as a general property — assuming that being bigger confers a cost advantage over smaller competitors simply by virtue of size. A large company with $50 billion in revenue can be disrupted just as decisively as a small one if the underlying economics of the market are contestable. Scale produces a structural cost advantage only in a specific condition: when that scale is concentrated within a defined, bounded market — a geography, a customer segment, a distribution channel — and the cost structure that results from dominating that bounded market cannot be replicated by any competitor without first winning the market share that nobody will hand them.

This is the insight that most scale-based analyses miss entirely. The moat does not come from being large in the aggregate. It comes from being dominant in a specific arena, and then using the economic advantages that dominance produces to make that dominance self-reinforcing before expanding to the next arena. The sequence matters as much as the outcome.

Walmart is the clearest possible demonstration of this dynamic — but only if you understand where Walmart actually started.

In 1962, Sam Walton opened his first Walmart in Rogers, Arkansas. Not in New York. Not in Chicago. In a small town of roughly 5,000 people in northwest Arkansas, a market that every other major retailer had looked at and passed on as too small to justify the investment. Walton’s thesis was precisely the opposite: small towns were underserved, customers in those markets had fewer alternatives, and a retailer who dominated a cluster of small towns in a single region could build the local scale to negotiate better supplier terms than the general stores and small-town retailers who currently served those customers — without ever having to go head-to-head with Sears or Kmart in the large markets where those competitors were entrenched.

For the first decade, Walmart barely left Arkansas. By the early 1970s, Walton had opened stores in a tight cluster across Arkansas and the immediately adjacent corners of Missouri and Oklahoma — a radius of roughly 300 miles from his original store. This geographic concentration was not accidental and it was not a failure to grow faster. It was the strategy. By saturating a specific region before moving on, Walmart built something that a retailer spread thinly across a national footprint could not: regional distribution density. A single distribution centre in Arkansas could serve 30 stores within a few hours’ drive, which meant lower logistics costs per store, faster restocking, and less inventory waste than a competitor running stores so dispersed that each required its own supply chain infrastructure. The regional density was itself a supply-side advantage, and it was only achievable because Walton chose depth over breadth in the early years.

This regional dominance also meant that Walmart’s purchasing volume — while modest at a national level in the 1960s — was large enough relative to the suppliers serving those specific markets to secure meaningfully better pricing than any of the local competitors could. A general store in a small Arkansas town buying a hundred hammers a year had no leverage over a hardware supplier. Walmart buying for thirty stores in the same region had considerably more. The scale advantage was local and bounded, but within those bounds it was real and compounding.

Now watch what happened when those early advantages — regional distribution density and volume-based supplier pricing — were applied to the demand side in each new town Walmart entered.

Before Walmart arrived, a typical small town had its needs served by a collection of independent local retailers: a hardware store, a grocery, a pharmacy, a clothing shop. Each had a local monopoly in its category. Customers knew these stores well, had been shopping there for years, and faced enough search cost and ingrained habit that switching to an alternative — even if one existed — simply was not worth the disruption for routine purchases. The friction of replacement was modest but sufficient. It had sustained healthy margins for these businesses for decades.

Walmart arrived with a supply-side cost structure that none of them could match. Because Walmart’s regional purchasing volume allowed it to negotiate lower input costs than any single-category local retailer, it could price below what those retailers needed to charge just to cover their own cost of goods. The local hardware store was not losing to a better-run hardware store. It was losing to a competitor whose cost of goods was structurally lower before either of them opened their doors each morning.

Those lower prices triggered something on the demand side. A family that previously made separate trips to the hardware store, the grocery, and the pharmacy began consolidating into a single Walmart visit — not just because the prices were lower, but because the convenience of one stop replaced the search cost and time cost of three. As that consolidated shopping trip repeated across weeks and months, the behavior began to encode itself as habit. The cue — the shopping list, the errand — now automatically produced the Walmart trip as the routine response. Each repetition deepened the groove. The local specialty stores, stripped of foot traffic, faced declining revenue. Declining revenue meant even less purchasing volume, which meant worse supplier terms, which meant thinner margins, which meant either higher prices or reduced inventory — neither of which helped compete against a lower-priced, better-stocked alternative that customers were already habituated to. Most closed within a few years.

Once the local competitors were gone, a new entrant trying to open a competing general retailer in that town faced an economy that had been reshaped entirely around Walmart’s economics. To match Walmart’s prices, the new entrant needs Walmart’s supplier terms. To get Walmart’s supplier terms, it needs Walmart’s purchasing volume. To achieve Walmart’s purchasing volume, it needs to win customers away from a store those customers have been using habitually for years. To win those customers, it needs to offer lower prices or a dramatically better experience — but it cannot offer lower prices because its input costs are structurally higher, and a better experience alone is not compelling enough to break a well-established routine.

The loop is closed. The supply-side cost advantage funded the price leadership that built the demand-side habit. The habit eliminated the competition. The elimination of competition locked the volume advantage permanently within that geography. And the locked volume continued compounding Walmart’s supplier leverage as it replicated the same playbook — local saturation first, regional density second, national expansion third — in market after market across the country.

This sequencing is the part that most analyses of Walmart’s success overlook. The national scale that Walmart eventually achieved was not what created the moat. It was the aggregate result of hundreds of individually won local markets, each of which had been approached with the same strategy: build regional density to the point where the supply-side and demand-side advantages start feeding each other, let the loop run until the local competition is gone, and then move to the next cluster. The moat at the national level is formidable precisely because it was built local-first, one bounded market at a time, in a way that made each individual market self-sealing before the next was attempted.

The general principle the Walmart story illustrates — and the reason it belongs in a framework built around barriers to entry — is that economies of scale become a genuine moat only when they produce a combination of supply-side cost advantage and demand-side captivity within a specific, bounded arena, and when achieving that combination takes long enough that any competitor arriving later must absorb years of structural losses just to reach the starting line. Being large in the abstract is not a moat. Being dominant in a specific place, in a way that took years to build and that the economics of the market make nearly impossible to replicate — that is.

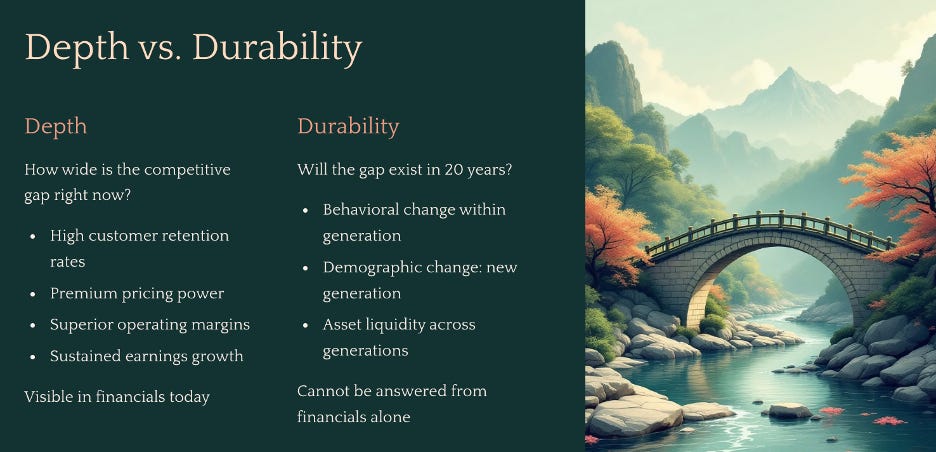

Strong Today Is Not the Same as Durable

Before the moat analysis is complete, there is one distinction that determines whether you are building a ten-year thesis or a thirty-year one — and it is the distinction that most structural analysis either skips or treats as an afterthought.

Depth describes how wide the competitive gap is right now. A business with genuinely high switching costs retains customers at high rates and commands premium pricing. A business with exclusive supply advantages sources inputs at costs competitors cannot match, producing structurally superior operating margins. A business with dominant scale within a bounded market has a per-unit cost structure that no smaller competitor can approach. Depth is visible in the financials — in the Cash Reinvestment Rate, the return on equity, the operating margins relative to the industry, and the earnings growth rate sustained over time. Depth tells you how strong the moat is today.

Durability asks whether the same gap will still exist in 20 years. And that question cannot be answered from the financial statements, because the financials only reflect what has already happened. Durability depends on whether the assets that create the moat today are adaptable to the behavioral and demographic changes that will arrive over the holding period — changes that are inevitable in some form, even when their specific direction is impossible to predict.

These are two entirely separate assessments, and confusing them is one of the most expensive analytical mistakes an investor can make.

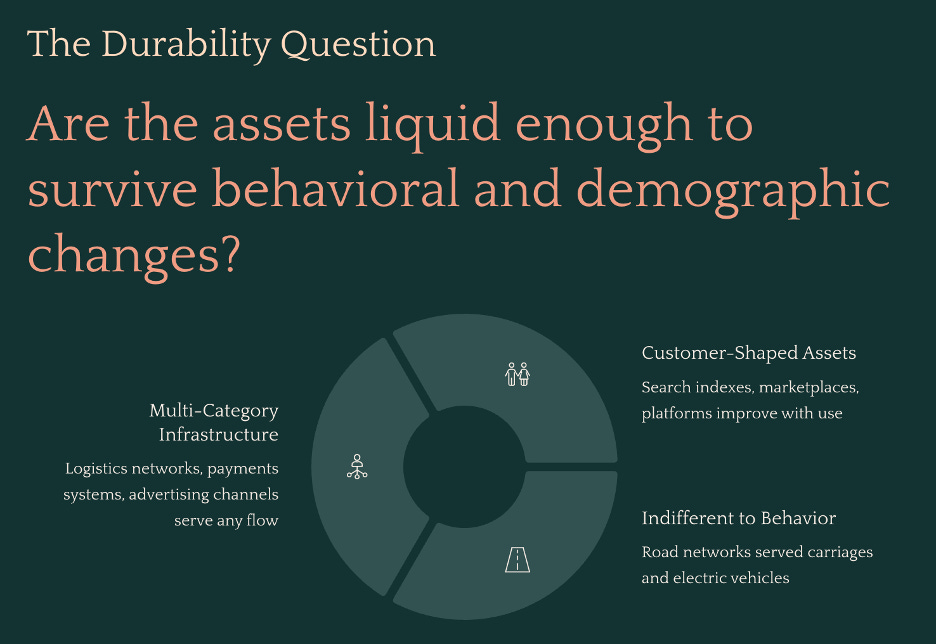

Why Strong Moats Fail: The Two Threats to Durability

A moat erodes through two distinct channels, and understanding both matters because each one requires a different kind of vigilance.

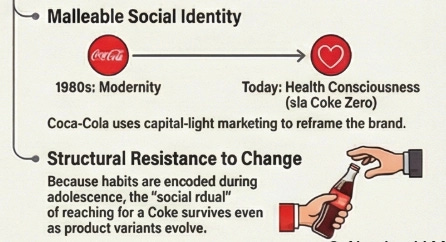

The first is behavioral change within a generation. The same customers stop doing something the way they used to. They do not disappear, and the underlying need does not go away — they simply begin satisfying it differently. When this happens, the question is whether the asset that served the old behavior can serve the new one.

The second is demographic change — a new generation arrives having never formed the habit in the first place. This is more permanent than behavioral change, because there is no existing relationship to migrate and no habit to redirect. The business must earn the new demographic from scratch, competing against whatever captured that generation’s attention during the years when habits were forming. By the time the shift is large enough to appear in the financials, that window has usually already closed.

The regional newspaper failed through both channels at once. Existing readers migrated to digital screens. Each new generation arrived without ever forming the morning paper ritual. The classified advertising network transferred to free platforms. The readers did not stop wanting news. The advertisers did not stop wanting to reach buyers. But the assets — printing infrastructure, physical distribution, a newsroom built around daily print cycles — were so specifically tied to the physical format that none of them could serve those needs in the new form they took. The format changed. The assets could not follow.

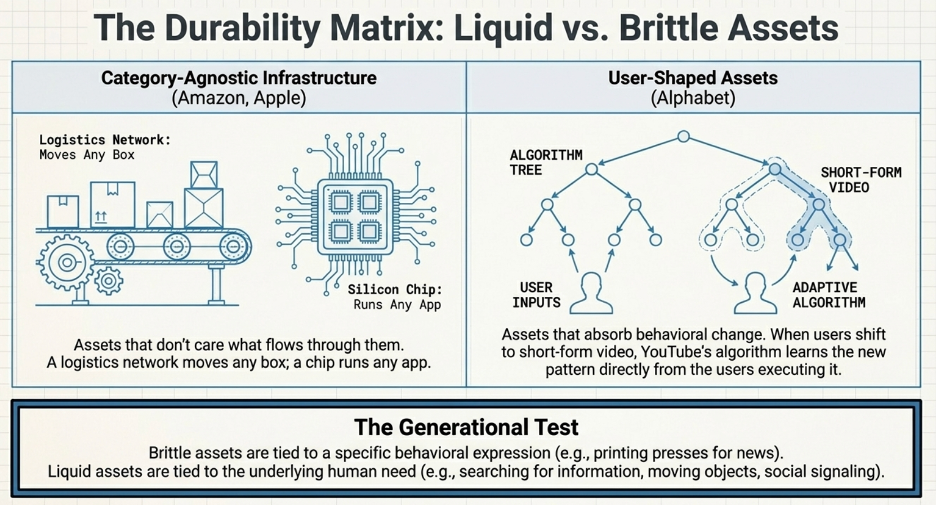

The Holy Grail: What Makes an Asset Liquid Across Generations

The question that determines whether a moat is worth holding for a decade or three decades is not “is this moat strong today?” It is: are the assets that create this moat liquid enough to survive the behavioral and demographic changes that will arrive over the holding period?

A liquid asset is one that serves a fundamental underlying need — or is shaped directly by customers themselves — such that changes in how people satisfy that need route through the same asset rather than past it. A brittle asset is one tied so specifically to one behavioral expression of a need that when the expression changes, the asset becomes irrelevant.

The road network is the most durable illustration of a liquid asset in history. The infrastructure built for horse-drawn carriages in the 19th century now carries electric vehicles, autonomous delivery robots, and long-haul freight. The builders anticipated none of these use cases. It did not matter, because the underlying need the road serves — moving people and goods through physical space — has not changed and will not change. Every behavioral shift in transportation technology has routed through the same physical infrastructure in a new form. The asset is indifferent to the behavior built on top of it, because the behavior changes but the fundamental need does not.

Two types of assets reliably exhibit this property.

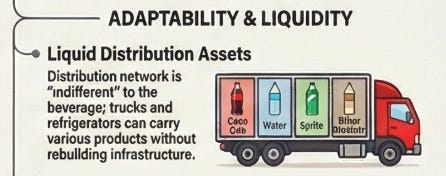

The first type serves the general population across multiple categories and behavioral contexts. A logistics network, a payments infrastructure, an advertising distribution channel — these assets are indifferent to what flows through them. Amazon’s fulfillment network moves boxes. It does not know or care what is inside them. A shift from electronics to groceries to pharmaceuticals does not require the network to be rebuilt. It requires the network to handle a new category of object, which the infrastructure is already designed to do. Walmart does the same. It does not care if customers are shopping physically or following the e-commerce trend. The behavioral shift simply gives the asset new things to do.

The second type is assets that are shaped by customers themselves through their use of it. A search index that improves with every query. A marketplace that becomes more valuable with every transaction. A social platform that becomes more relevant with every post. These assets do not just survive behavioral change — they absorb it, because the people driving the change are the same people contributing to the asset. When consumer behavior shifts toward a new content format, YouTube’s recommendation engine learns the new format from the consumption patterns of the users migrating toward it. The asset reshapes itself in the direction of behavioral change because the users reshaping their behavior are simultaneously reshaping the asset.

The durability question, applied to every business in this paper, is therefore: when consumer behavior shifts in ways we cannot precisely predict, does the asset that creates this moat move with the shift or get stranded by it? The cases that follow examine each business against this test — not to confirm a foregone conclusion, but to identify precisely where the edges of each moat’s durability sit, because those edges define the conditions under which the long-term thesis breaks.

Part Three: Identifying the High-Growth Compounder

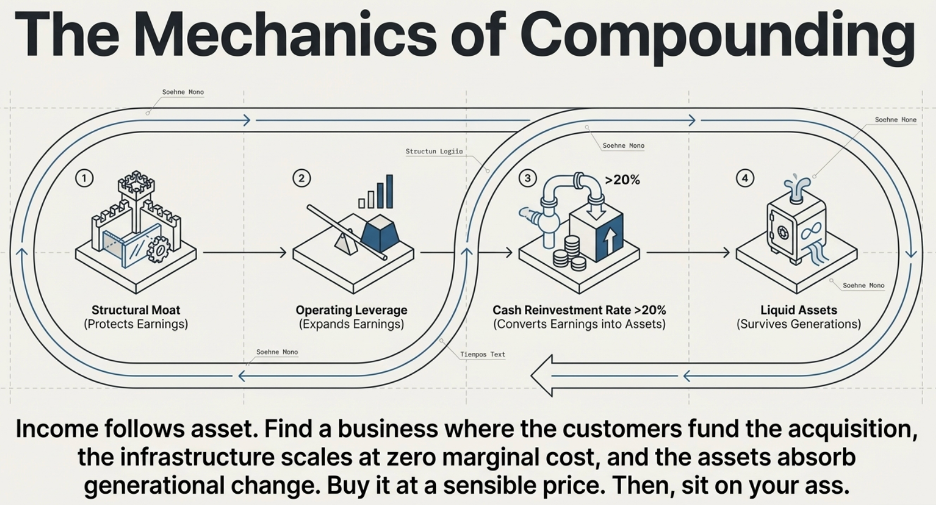

Start With the Most Basic Truth in Business

Before even thinking about a spreadsheet, there is a first-principles observation that almost every model eventually obscures: a business earns money because it has productive assets to earn it from. Income follows asset. Always. No exceptions.

A property generates rental. A factory generates income because it converts raw materials into products customers pay for. A software platform generates income because it has a codebase, a customer base, and the infrastructure to serve them. A brand generates income because it commands pricing power with customers who trust it. Strip away the accounting and the complexity, and every dollar of revenue a business earns traces back to a productive asset that made the earning possible. No asset, no income. More productive asset, more income.

This is not a novel insight. It is simply the foundational economic reality that most financial analysis works several layers above, dealing with revenue growth rates, earnings multiples, and margin trends without ever returning to the underlying mechanism. But when you hold the principle clearly — income follows asset — it changes the question you ask about a business. Not “how much did it earn last year?” but “how fast is its productive asset base growing, and how efficiently is each new asset being deployed?” Because those two answers will tell you, more precisely than almost any other metric, how fast the income can compound from here.

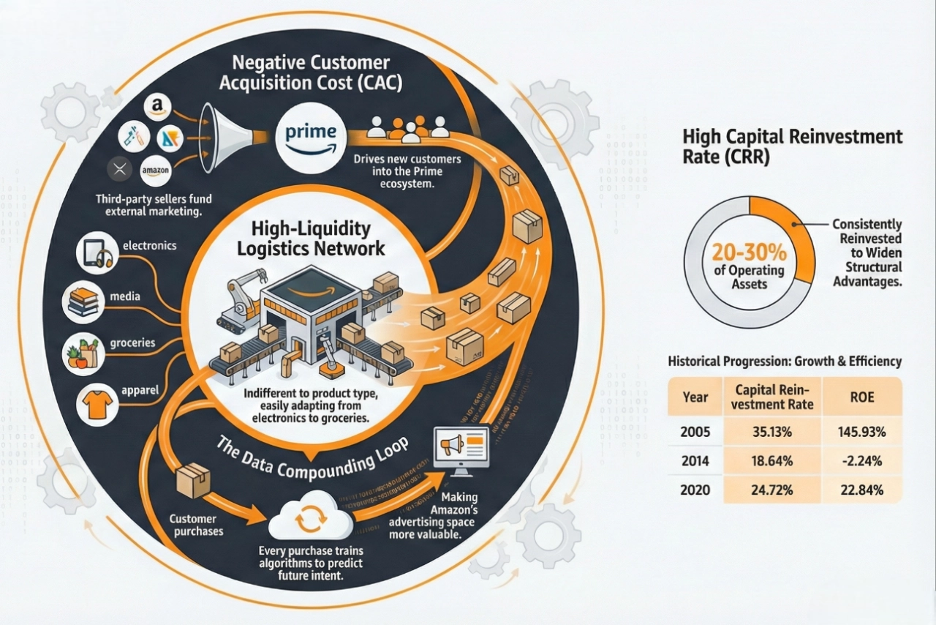

The Reinvestment Engine

Now follow the logic one step further.

If income follows asset, and a business earns income every year, then the critical variable is what happens to that income once it is earned. The business faces a choice: return the income to shareholders as dividends, or reinvest it into more productive assets. If it reinvests, the asset base grows. A larger asset base produces more income next year. That income can be reinvested again into more productive assets. Each cycle, the productive base expands, and the income expands with it.

This is compounding — not as a financial abstraction, but as the literal mechanics of how a business’s income-generating engine gets larger over time. And the speed at which this compounding runs depends on two things: how much of the income is reinvested, and how productive each dollar of reinvestment is. A business that reinvests 10 cents of every earned dollar builds its asset base far more slowly than one that reinvests 50 cents. And a business that generates $2 of future income from every $1 reinvested compounds far faster than one that generates $1.10.

The question for an investor evaluating a potential long-term holding is therefore simple to state and demanding to answer: how efficiently is this business converting its current earnings into productive assets that will generate future earnings? What is the reinvestment rate, and what is the quality of that reinvestment?

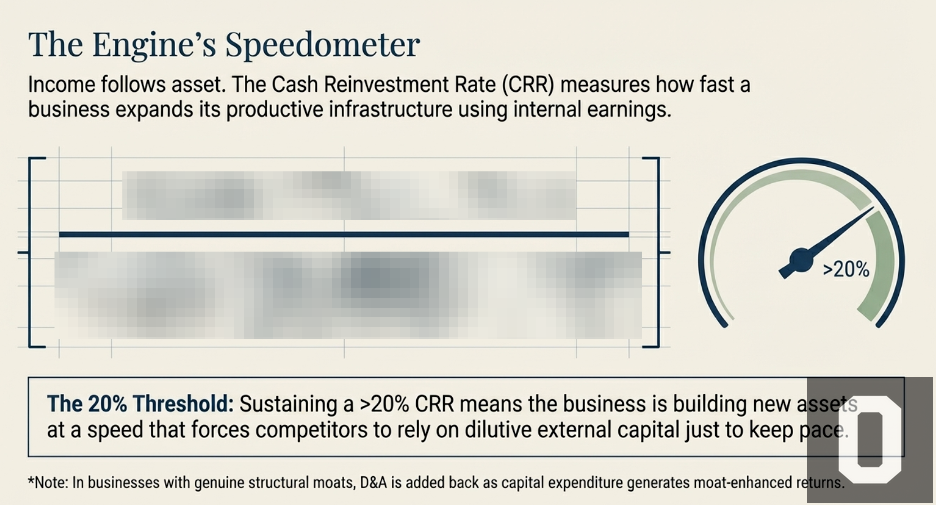

This is precisely what the Cash Reinvestment Rate measures.

The Cash Reinvestment Rate: The Engine’s Speedometer

The CRR is calculated as follows:

[To be revealed in subsequent writings]

The numerator is the earnings available for reinvestment. The denominator is the total productive asset base — the accumulated result of every reinvestment decision the company has ever made, expressed as the capital currently deployed in the business.

Dividing one by the other gives you the rate at which the business is rebuilding and expanding its own productive infrastructure from a single year’s earnings.

A CRR of 20% means that in a given year, the business is adding productive assets equivalent to 20% of its existing deployed capital base — funded entirely from that year’s earnings, with no external capital required. Sustained over ten years, that is a business more than doubling its productive asset base from internal earnings alone, without issuing shares or taking on debt. The compounding is entirely self-funded.

The D&A adjustment deserves a careful note because it is conditional, not universal. This finding was made when I did an analysis on Amazon. Depreciation and amortisation are accounting charges that reduce reported operating income but do not represent cash leaving the business. In a business without a durable moat — one where new capital investment simply replaces ageing assets at replacement cost — depreciation is a real economic cost and adding it back would overstate the earnings available for genuine growth. But in a business with a strong structural moat, new capital investment generates returns that far exceed the replacement cost it is nominally categorised under. A new Amazon fulfilment centre, built on top of Amazon’s existing logistics infrastructure, brand equity, and Prime membership base, does not earn a replacement-cost return. It earns a moat-enhanced return. In those cases, adding back D&A gives a more accurate picture of the true earnings power available for compounding. This is why the qualitative moat analysis in Part Two is not a parallel exercise — it is a prerequisite. Without it, you cannot correctly calibrate the metric you use to validate it.

Why 20% Is the Threshold

The 20% threshold is not arbitrary. It is the level above which the productive asset base compounds faster than most well-capitalised competitors can match through any combination of borrowing, equity issuance, or operational improvement.

Think about what it means for the competitive gap over time. A business with a 20% CRR is building new productive assets — new customer relationships, deeper technology moats, denser distribution infrastructure, richer institutional knowledge — at a rate that requires a competitor to inject substantial external capital just to keep pace. If a competing business generates a 10% CRR and must raise the remaining 10% through debt or equity issuance to match the growth, its cost of expansion is structurally higher. It pays interest or dilutes shareholders to match what the better-moated business finances from internal earnings at no dilutive cost. Over five years, the gap between these two businesses is not a rounding error. It is a compounding structural divergence that widens every year the conditions persist.

A rising CRR over the holding period is the financial signature of a moat that is working. It means the business is generating progressively more earnings per dollar of deployed assets — the competitive advantage is deepening, not eroding, and the compounding engine is running faster rather than slowing. When the CRR begins to contract, it is the earliest quantitative signal that something structural may be changing — competition is intensifying, reinvestment opportunities are becoming less productive, or the moat is narrowing. The qualitative thesis and the CRR should be telling the same story. When they diverge, the divergence is the most important question to answer.

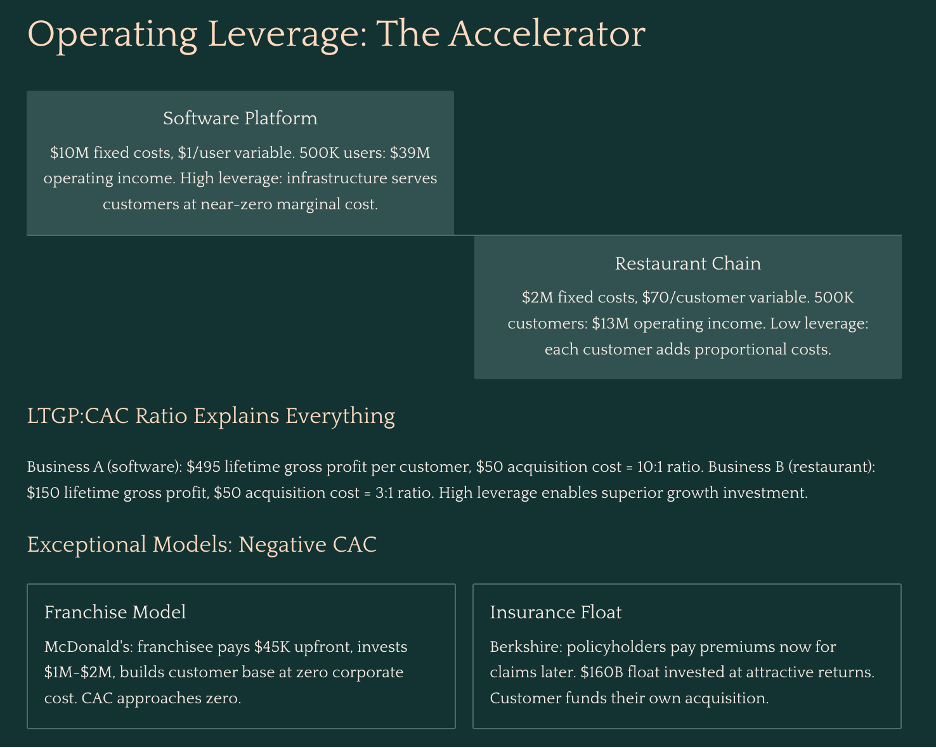

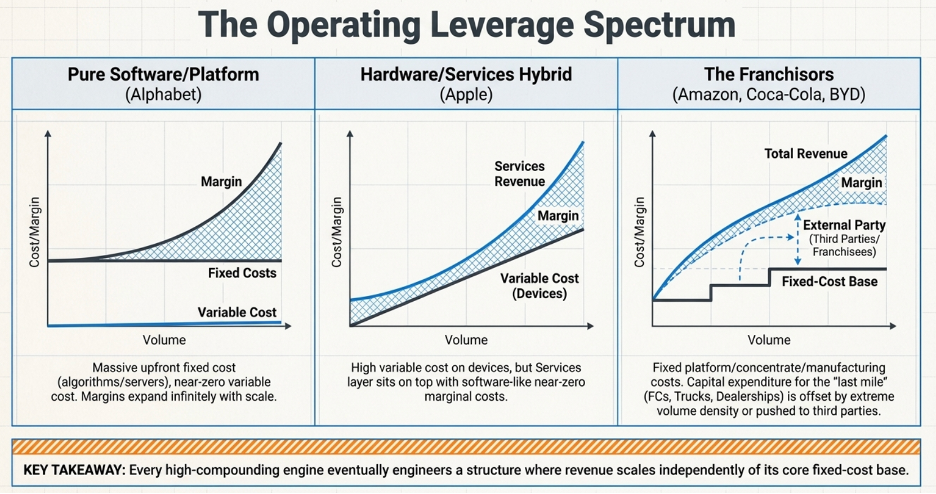

Operating Leverage: The Mechanism That Makes the CRR Accelerate

To understand why operating leverage is the defining characteristic of the highest-compounding businesses in the world, start with a simple question you can ask about any business: for every new customer you acquire, how much more does it cost you to serve them?

That question — how much of your cost structure grows with your customer base versus how much stays fixed regardless of how many customers you have — determines almost everything about how profitable a business becomes as it scales.

Two Businesses, One Fundamental Difference

Consider two businesses side by side:

Business A is a software platform. Once the code is written and the servers are running, adding one more user costs almost nothing — roughly $1 per customer per year in incremental infrastructure. Its fixed costs — the engineering team, the servers, the security systems — run $10 million per year whether it has ten customers or ten million.

Business B is a restaurant chain. Every additional customer requires food, kitchen labour, and table service. Its variable cost is $70 per customer per year. Its fixed costs — leases, base kitchen staff, management — are $2 million per year.

Now watch what happens as both businesses grow from 100,000 customers to 500,000.

At 100,000 customers, Business A generates $10 million in revenue against $10 million in fixed costs and $100,000 in variable costs. It is barely breaking even. Business B, meanwhile, generates $10 million in revenue against $9 million in total costs, producing $1 million in operating income. At this early stage, Business B looks like the better business.

At 500,000 customers, the picture reverses dramatically. Business A generates $50 million in revenue. Its fixed costs have barely moved — call it $10.5 million with some modest team additions. Variable costs are $500,000. Operating income: $39 million. Business B also generates $50 million in revenue, but its variable costs have grown to $35 million, giving total costs of $37 million. Operating income: $13 million.

Revenue grew five times for both businesses. Business B’s operating income grew thirteen times — a strong result by any measure. Business A’s operating income grew from near zero to $39 million. The gap is not explained by better management or a stronger product. It is explained entirely by cost structure. Business B will always spend $70 to serve a customer who pays $100. Its margin is permanently capped at $30 per customer regardless of how large it becomes. Business A spends $1 to serve a customer who pays $100, and that $1 does not materially change whether there are 100,000 customers or 5 million. Every new customer is almost pure profit layered on top of a fixed cost base that was already paid.

This is what high operating leverage means in practice: a cost structure where the hard, expensive work of building the business is done once upfront, and every customer acquired after that generates disproportionate profit because the infrastructure that serves them already exists.

What This Means for Each Customer Relationship

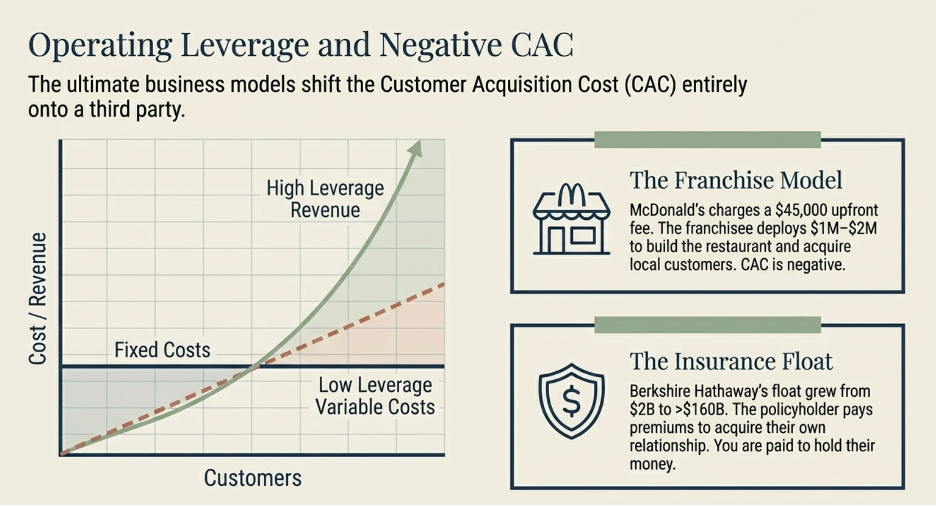

Operating leverage does not just change how profitable a business becomes in aggregate. It changes the economics of each individual customer relationship — and those unit economics are what determine how aggressively a business can afford to grow.

This is where the LTGP:CAC ratio becomes the right lens. Lifetime Gross Profit, or LTGP, is the total gross profit generated by a single customer across the entire duration of their relationship with the business. Customer Acquisition Cost, or CAC, is what it cost to bring them in. The ratio of LTGP to CAC tells you how efficiently the business converts its growth spend into long-term profit.

In Business B, the gross profit per customer per year is $30 — the $100 in revenue minus the $70 variable cost. Over a five-year relationship, LTGP is $150. CAC is $50. The ratio is 3:1. For every dollar spent acquiring a customer, the business earns three dollars of gross profit over that customer’s lifetime.

In Business A, the gross profit per customer per year is $99 — the $100 in revenue minus the $1 variable cost. Over the same five years, LTGP is $495. CAC is still $50. The ratio is just under 10:1. For every dollar spent acquiring a customer, the business earns nearly ten dollars of gross profit.

The practical consequence of this gap is significant. Business A can rationally justify spending far more to acquire each customer than Business B, because the return on that spend is so much higher. If both businesses are competing for the same customer through the same advertising channel, Business A can outbid Business B and still come out ahead. Business A can invest more in product quality to reduce churn. It can offer a better onboarding experience. It can subsidise trials and promotions. All of this is economically rational precisely because the high operating leverage structure ensures the eventual payoff per customer is large enough to absorb a higher upfront cost. The high-leverage business becomes a structurally superior competitor for customers — not because of its product alone, but because its economics allow it to invest in growth at a rate its lower-leverage competitor simply cannot match without destroying its margins.

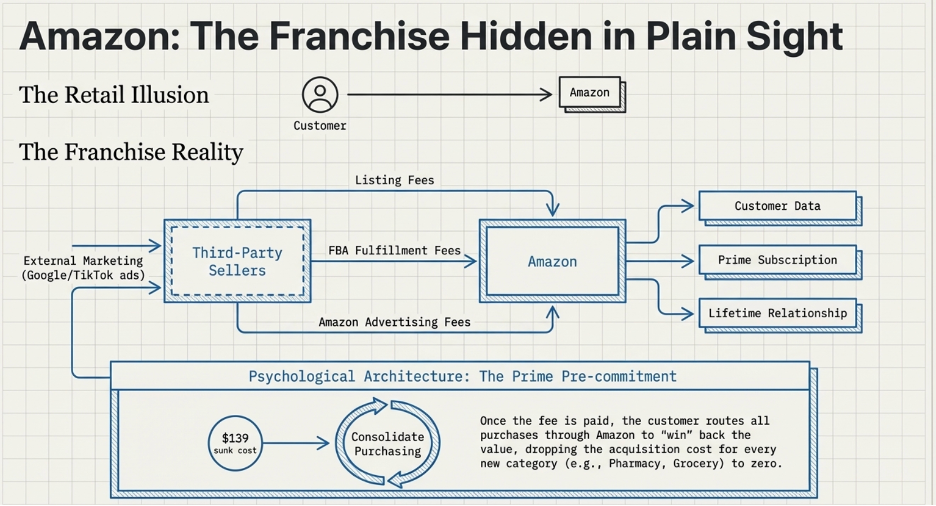

But here is where the most exceptional businesses diverge from even Business A. The businesses with the highest LTGP:CAC ratios in the world are not simply efficient at acquiring customers. They have engineered their model so that the cost of acquiring customers approaches zero — or turns negative entirely, meaning the growth of the business is funded by others rather than by the business itself. Two business models achieve this in different ways, and each one is worth understanding on its own terms before seeing how they all arrive at the same conclusion.

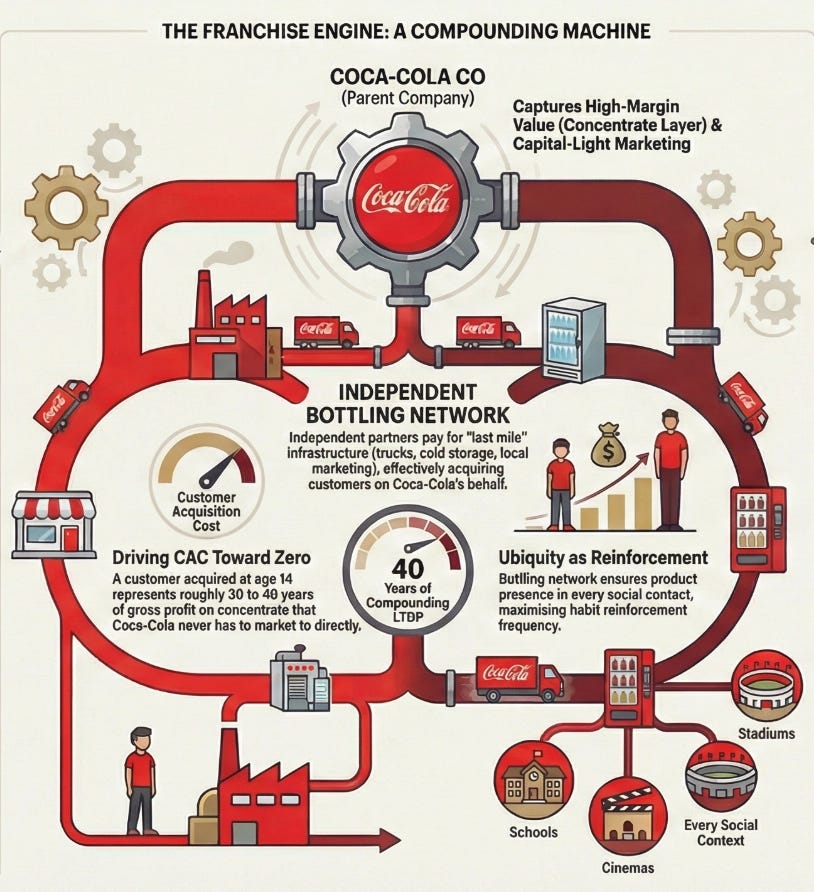

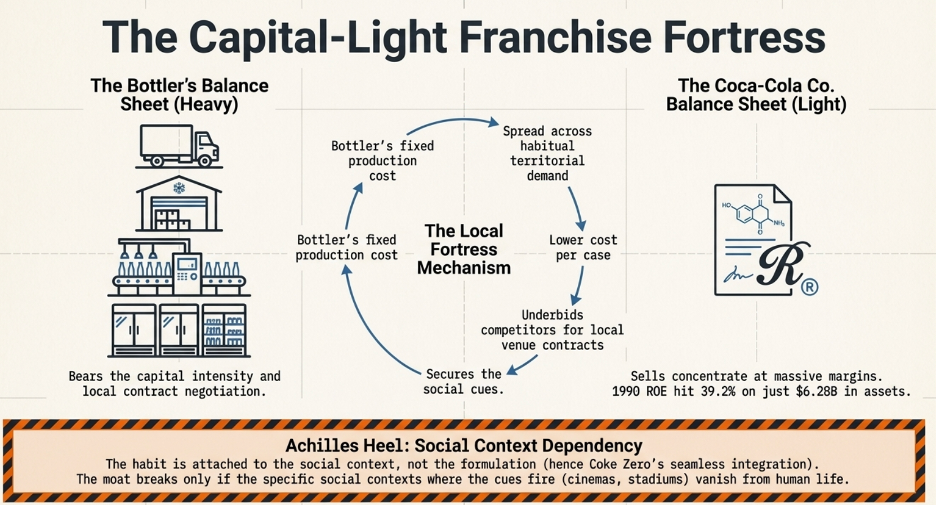

The Franchise Model: Getting Paid to Grow

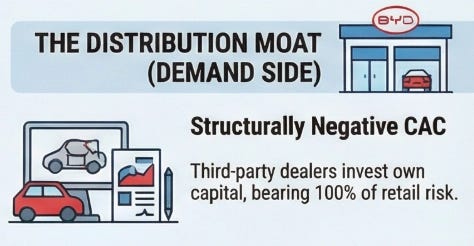

A conventional business acquires customers by spending money. Advertising budgets, salespeople, promotions, discounts — every new customer costs something to bring in. The franchise model does something structurally different: it shifts the entire cost of building and acquiring customers onto the franchisee.

When McDonald’s grants a franchise, the franchisee pays an upfront fee of roughly $45,000, commits to ongoing royalties of 4 to 5% of gross revenue, and then invests their own capital — typically $1 million to $2 million — to build the restaurant, hire and train staff, and develop the local customer base. They run local marketing. They handle all operations. They bear all the execution risk of whether the location performs.

From McDonald’s corporate perspective, every new franchise location that opens represents a new pool of customers being built and served at zero cost to McDonald’s. The franchisee did the work. The franchisee took the risk. And McDonald’s collects a royalty on every dollar of revenue those efforts generate for as long as the location operates.

The CAC for McDonald’s is therefore not just low — it is negative. McDonald’s is paid, upfront, by the very operators who are building its customer base on its behalf. In LTGP:CAC terms, the denominator approaches zero and the ratio becomes effectively infinite: there is no acquisition cost to divide by because the acquisition process itself generates income.

The operating leverage on top of this is equally extreme. McDonald’s corporate revenues — royalties, franchise fees, and lease income — scale with the size of the network and same-store sales performance. Its fixed cost base, however, does not scale with those revenues in any meaningful way. Food costs, kitchen labour, utilities — all of it sits with the franchisee. McDonald’s adds revenue with each new restaurant and each percentage point of same-store sales growth without bearing any of the variable costs of serving the customers those restaurants serve. The margin expansion from each additional dollar of network revenue is as close to pure profit as any business model produces.

The Insurance Float: Negative CAC With a Second Income Stream

Insurance extends the negative-CAC concept one step further by adding a mechanism that has no direct equivalent in any other business model: the float.

When a policyholder pays a premium, they are paying today for protection against a future event that may or may not occur — a car accident, a fire, a medical procedure. The insurer collects that premium now and pays claims later, sometimes months later, sometimes years. In the intervening period, the premium sits with the insurer as investable capital. This pool of collected premiums not yet paid out as claims is the float.

The float is not the insurer’s money permanently. It will eventually leave as claims. But in aggregate, for a large insurer writing billions of dollars of new premiums each year, the float is effectively permanent: new premiums constantly replenish what paying claims removes, and the total pool stays large and grows as the book of business grows. Berkshire Hathaway’s insurance operations have carried a float that has grown from roughly $2 billion in 1985 to over $160 billion today. That capital did not cost Berkshire a dividend payment or an interest charge. It was provided by policyholders who paid premiums to be insured.

Warren Buffett described this property early and precisely: the float is an interest-free loan from policyholders that Berkshire can invest in equities and businesses for as long as the insurance operation remains viable. The policyholders funded Berkshire’s investment portfolio. Berkshire earned the returns.

The CAC dimension is what makes the insurance model structurally remarkable. The policyholder pays the insurer to acquire and maintain their own business relationship. Every renewal premium is the customer funding the continuation of the relationship rather than the insurer spending to retain them. In a well-run book with strong customer retention, the insurer’s marginal acquisition cost is genuinely negative: the renewal premium exceeds the administrative cost of maintaining the policy, meaning the existing customer is generating net positive cash flow simply by staying.

The combined ratio determines whether the underwriting operation is adding to this or subtracting from it. The combined ratio is claims paid plus operating expenses, divided by premiums earned. A combined ratio below 100% means the insurer collects more in premiums than it pays out in claims and expenses — the underwriting itself is profitable, independent of any investment return on the float. A combined ratio of 95% means that for every $100 of premium collected, $95 covers claims and costs, and $5 is pure underwriting profit. That $5 of underwriting profit sits on top of whatever return the insurer earns by investing the float in the meantime.

When both are running simultaneously — a sub-100% combined ratio producing underwriting profit, and a large and growing float invested at attractive returns — the economics are extraordinary. The policyholder is paying you to hold their money. You are earning an investment return on that money. And the underwriting operation is generating profit on top of the investment return. In LTGP:CAC terms, there is no CAC: the customer paid to be acquired. There is a large and growing asset base — the float — generating returns that belong entirely to the insurer. And there is underwriting profit on top of that. This is what allowed Berkshire to compound its equity at rates that seemed implausible for its size over multiple decades. The policyholders financed the engine. Berkshire ran it.

Bringing It Back to the CRR

The franchise and insurance models are not exceptions to the operating leverage framework. They are its most powerful expressions.

In each case, the expensive, difficult work — building the brand and operating system, creating the intellectual property, developing underwriting expertise — is done once. The revenue that flows from that foundation scales with the network, the licensing estate, or the float, none of which require proportional increases in the cost base. More importantly, in each case the cost of acquiring and serving customers is borne by someone other than the business itself: the franchisee or the policyholder.

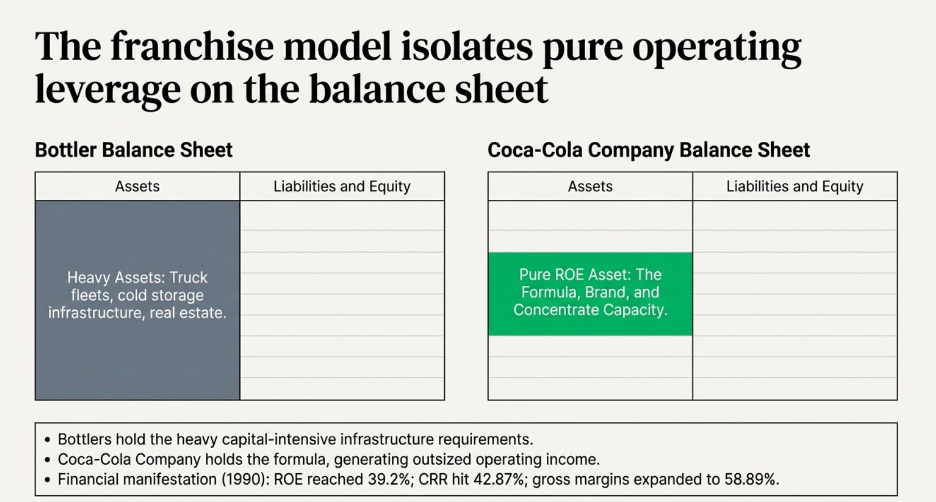

This is why the CRR in these businesses can sustain levels that conventional business models structurally cannot reach. When the earnings available for reinvestment are not being consumed by the cost of acquiring the customers who generated them, a larger share of each year’s income is available to compound the productive asset base. The CRR accelerates not because the business is being managed more efficiently, but because the architecture of the model has removed the largest drag on the reinvestment pool — growth cost — from the business’s own balance sheet and placed it on the participants in the ecosystem.

When you find a business with this structure, a genuine moat, and management aligned with long-term value creation, what you have found is a compounding engine where the fuel is provided by others and the returns accumulate entirely to the owner. The case studies that follow are each an example of exactly that.

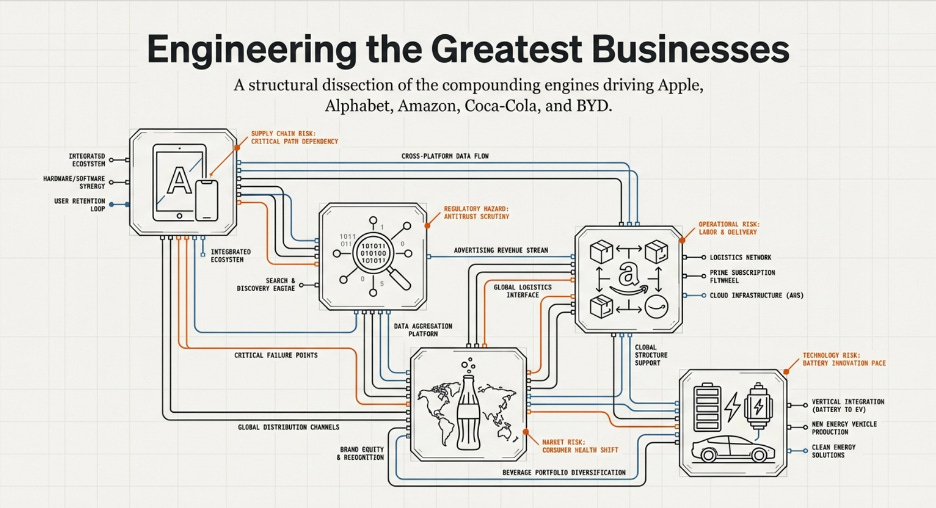

Part Four: The Companies

Apple — The Business That Gets Paid to Grow Itself

Who Is Really Acquiring Apple’s Customers?

Here is a question most people never ask about Apple: who is actually paying to bring in new customers?

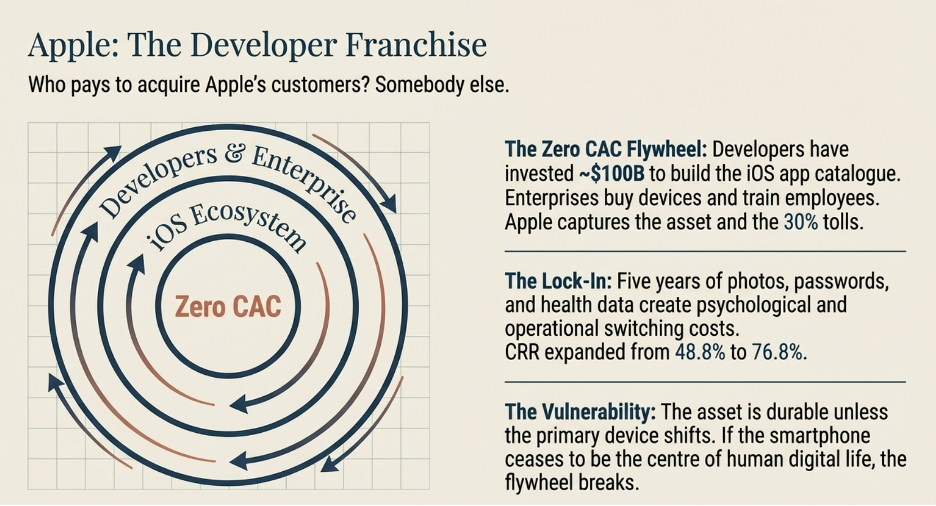

The obvious answer is Apple — through its marketing, its retail stores, its product launches. But the honest answer is mostly somebody else. Apple has built a system where outside parties spend their own money to grow Apple’s business, and Apple collects a toll on the result.

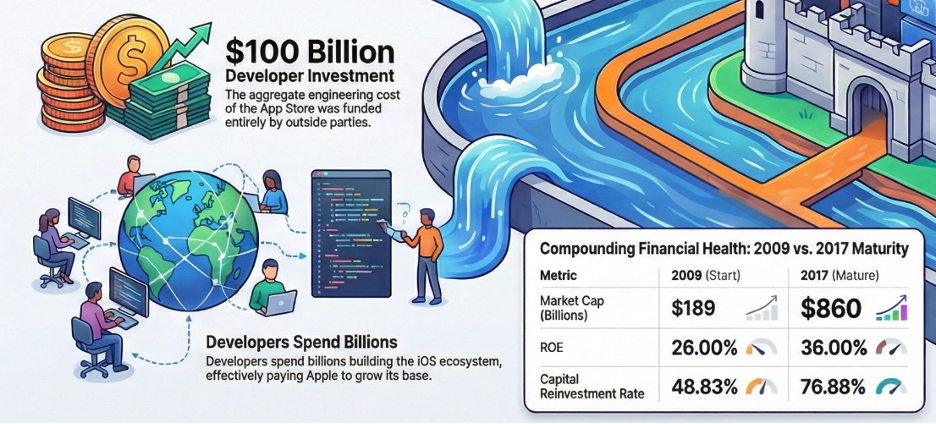

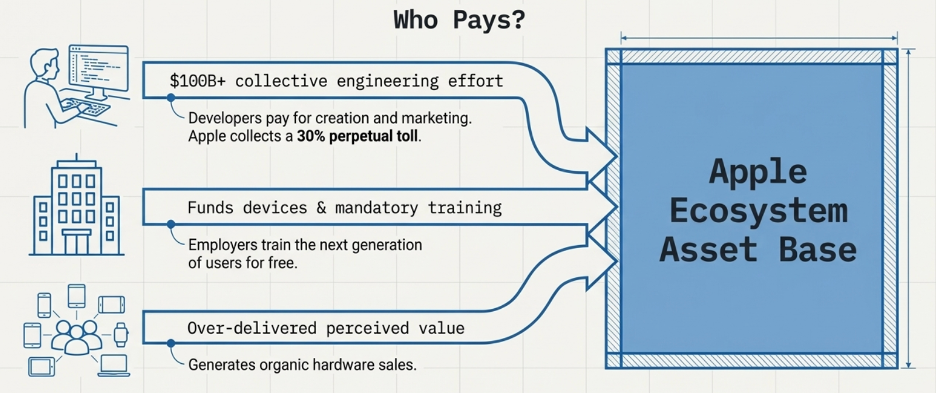

Start with app developers. When a developer builds an app for the iPhone, they are making a real financial bet. They hire engineers, spend months building the product, then run marketing campaigns to tell the world it exists. If the campaign works and someone downloads the app, one of two things happens. Either that person already owns an iPhone — in which case the app has deepened their attachment to the platform — or they do not own an iPhone yet, and the app just gave them a reason to buy one. Either way, Apple wins. The developer paid for everything. Apple collected 30% of all revenue the app generates, forever, and ended up with a platform that is now more valuable than it was before.

Apple did not pay to build that app. Apple did not pay to market it. The developer community has collectively invested roughly $100 billion in engineering effort building the iOS app catalogue. Apple contributed none of that capital. It received the asset.

Now think about what this means using the LTGP:CAC framework. CAC is what it costs to acquire a customer. LTGP is the total profit that customer generates over their lifetime. A ratio of 3:1 is a workable business. A ratio of 10:1 is exceptional. Apple’s ratio through the developer channel is not 10:1 — the CAC is effectively zero, because others are doing the acquiring and delivering the customers to Apple. When the cost of acquisition is zero, every dollar a customer generates over their lifetime is pure return on an acquisition cost that was never paid. When we add devices into the equation, they form a large initial fixed cost (e.g. press releases). But as LTGP is realized and grows over the period of time the customer spends in the ecosystem, the CAC becomes negligible. This demonstrates an example of the high-operating leverage business economics of Apple.

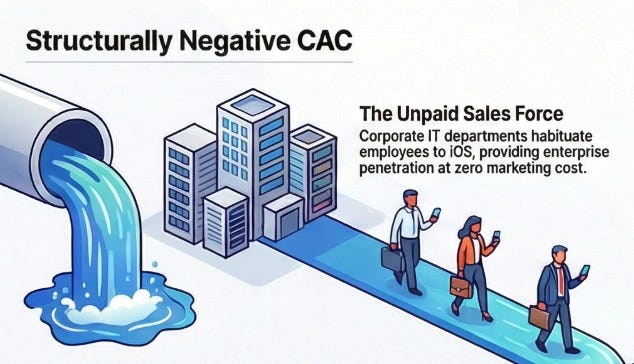

Companies contribute a second acquisition channel that rarely gets discussed. When a business buys iPhones for its employees — and millions of businesses around the world do — the company pays for the devices, sets them up, and trains its staff to use them. In doing so, it produces a generation of workers who spend years becoming fluent on iOS in a professional context and naturally carry that preference into their personal lives. Apple never sent a salesperson. The employer did the work for free

.An additional thing to add is “Customer evangelism”. Because the quality of the product was so strong and delivered value far beyond what the customer perceived they were paying for, advocacy and evangelism for the brand was strong. This resulted in sales of devices where Apple need not allocate additional marketing or advertising budget for. Looking from a first principles perspective, it also suggests that marketing principles are extremely important. In order to over deliver on perceived value, a business has to first understand deeply who the customers are, what problems they are specifically solving for, and how does it influence their specific demographic status. How it is solved for (logical or psychological solutions) is extremely important in creating perceived value as well. And hence, it is vital that the investor understands the economics of customer evangelism if a brand has one.

Why Leaving Becomes Almost Impossible

Every app a user accumulates on their iPhone makes them slightly harder to move to a competitor. Five years of photos organised in Apple’s system, messages in iMessage, notes in Apple Notes, saved passwords in iCloud Keychain, fitness data in Apple Health — none of this transfers cleanly to an Android phone. The cost of switching is not just the price of a new handset. It is mental capacity and time required to learn how to operate around the ecosystem and the realisation that five years of digital life has been built inside Apple’s walls, and most of it cannot be taken out.

Developers build this lock-in without intending to. Every app they publish adds another layer of personalisation that makes a user’s iPhone feel uniquely theirs. The more the phone feels like yours, the more leaving it feels like leaving part of yourself behind.

Apple then added Services on top. iCloud storage, Apple Music, Apple Pay, AppleCare — each subscription raises the monthly revenue Apple earns from a user who was already acquired years ago through someone else’s marketing spend. A user paying for three Apple services generates $25 to $30 per month in recurring revenue for Apple. That revenue carries almost no acquisition cost, because the acquiring happened long ago and was paid for by somebody else.

How the iOS Platform Turned Local Supply and Demand Into Compounding Scale

Before the App Store became a global phenomenon, it was a single platform serving one device. That specificity was not a limitation — it was the seed of the moat.

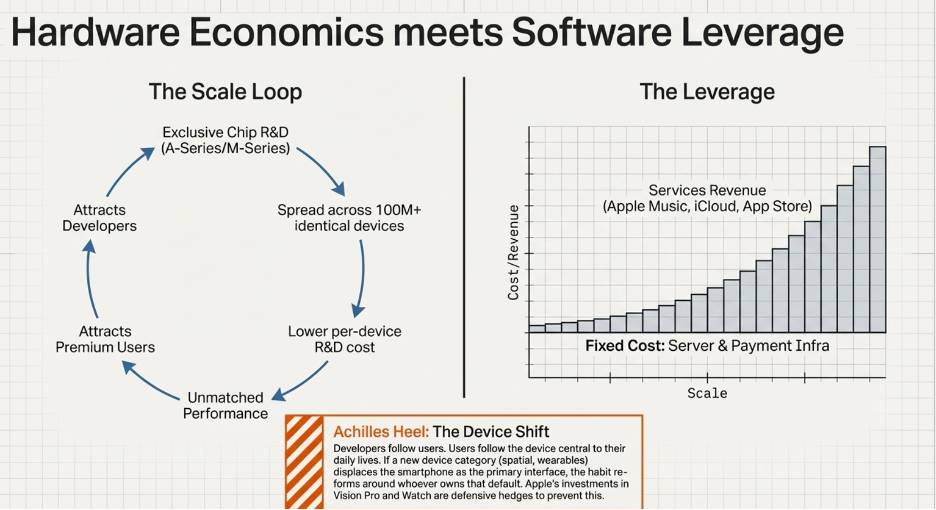

Apple’s supply advantage in the early smartphone market was chip and software integration: devices that performed materially better than Android alternatives at the same price tier, because the chip was designed for exactly one device running exactly one operating system. This drew a specific user: the premium buyer — high-spending, willing to pay for digital products, credit card on file.

That specific user concentration is what triggered the demand-side dynamic. Developers face a simple economic question when deciding where to build first: where are the users who actually pay? In the early smartphone era, the answer was unambiguous — iOS. Developers invested their own capital into that ecosystem, which enriched the app catalogue, which deepened switching costs, which attracted more premium buyers.

Now the economies of scale crystallise. Apple’s chip R&D budget — billions per year — is spread across hundreds of millions of identically-configured devices running one operating system. Every additional iPhone sold reduces the effective per-device R&D cost. A competitor designing chips for a fragmented Android ecosystem cannot achieve this: their R&D must cover dozens of device configurations across multiple price points. Their fixed R&D cost per premium device is structurally higher because the premium device is one configuration among many, rather than the sole focus of the engineering investment.

This is the precise mechanism. The supply advantage (better chip economics through focused R&D) drew the premium users into a specific platform. The demand advantage (developer investment, switching costs) trapped them there. The concentration of those users in one ecosystem spread Apple’s fixed costs over a growing base, which improved chip economics, which maintained the supply advantage, which sustained the user concentration. The loop reinforces itself. A competitor must replicate not just the chip or the app store, but both simultaneously in the same specific market segment — and must do so starting from zero volume while Apple’s per-device fixed costs are already lower than anything they can achieve.

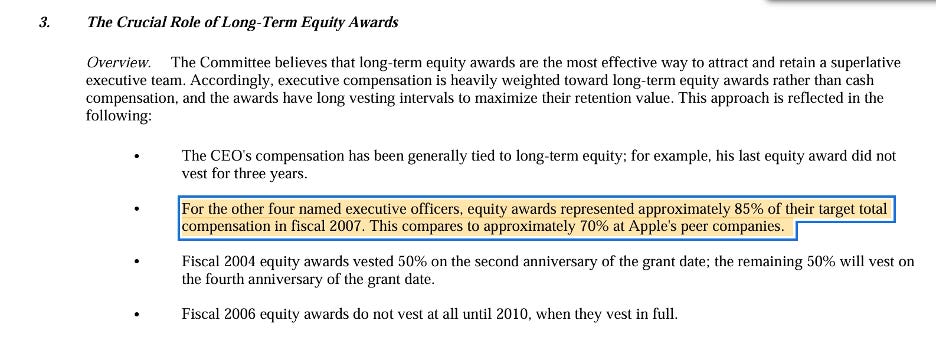

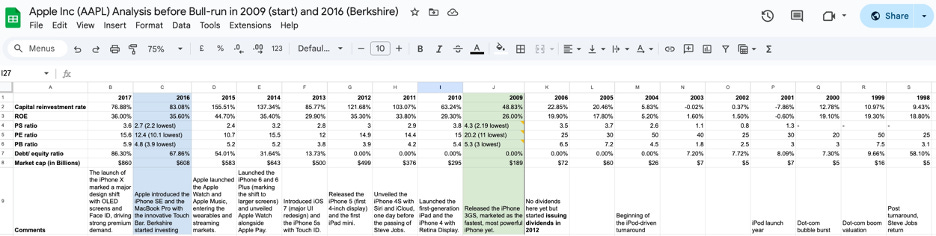

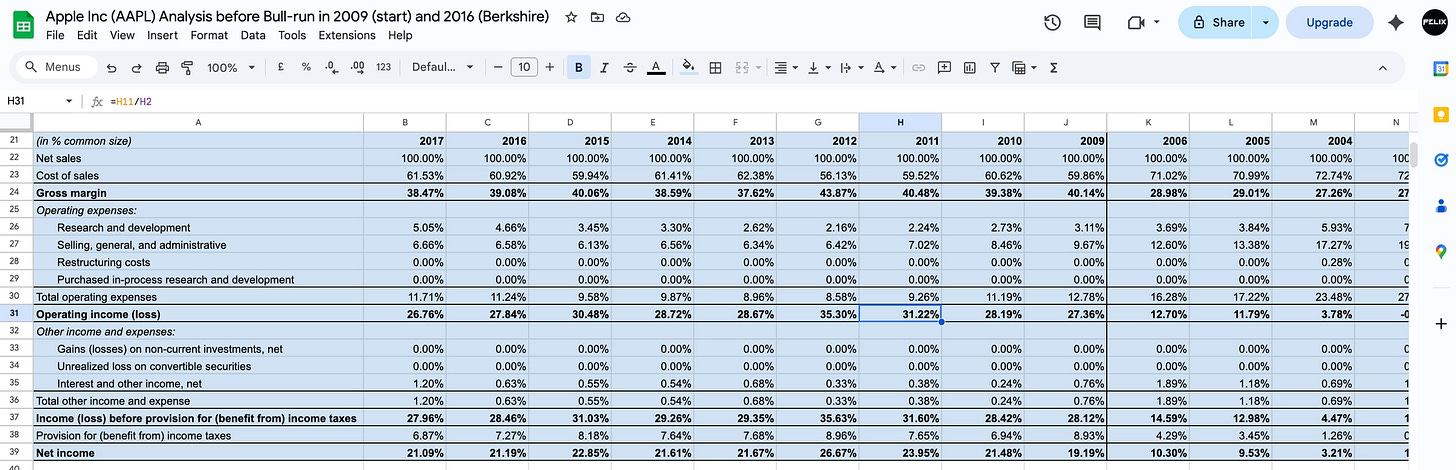

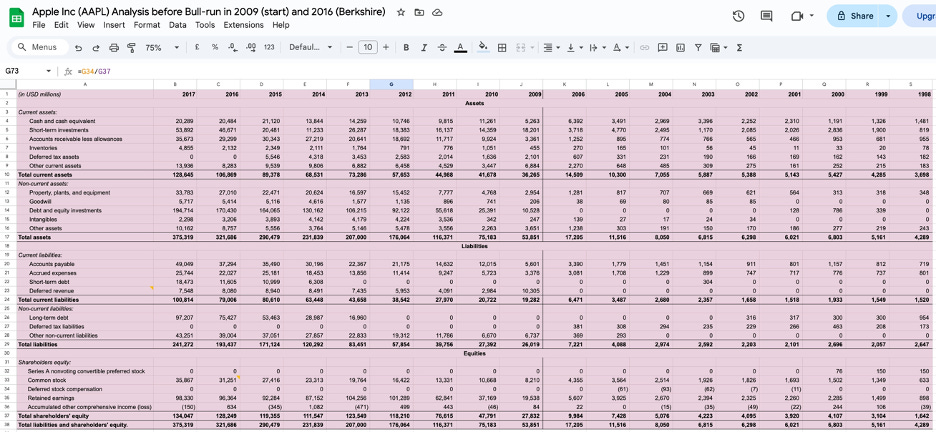

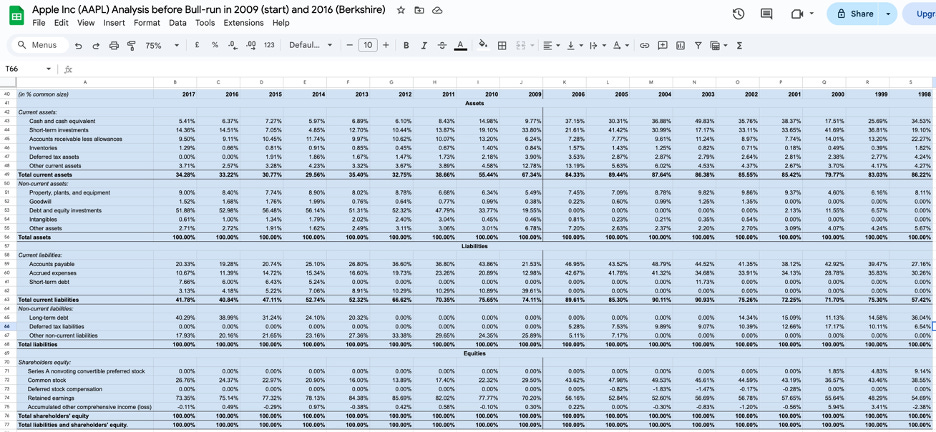

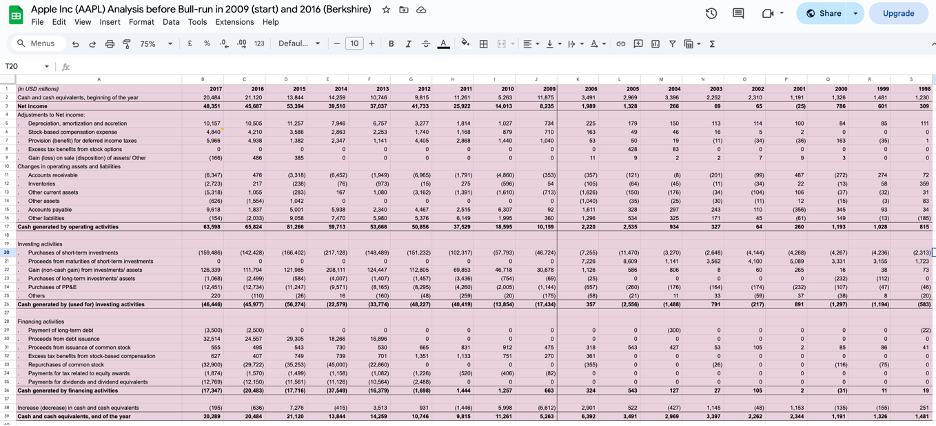

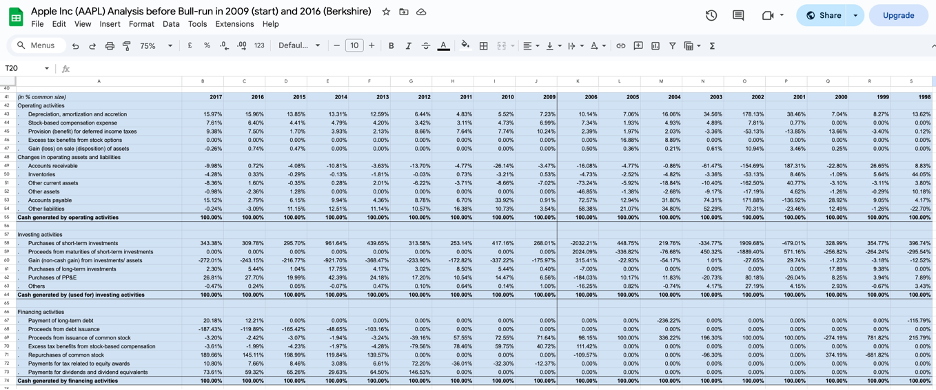

The financial result is visible in the compounding. Between 2009 and 2017, Apple’s Cash Reinvestment Rate expanded from 48.83% to 76.88%. Return on equity grew from 26% to 36%. Market capitalisation went from $189 billion to $860 billion. These are not the numbers of a company that launched a popular product. They are the numbers of a platform where the fixed cost of excellence is being spread across a user base that is structurally prevented from leaving.

What Creates the Operating Leverage

Apple’s operating leverage comes from a simple structural fact: the App Store and the Services layer were built once, and the cost of running them does not meaningfully grow each time a new user joins.

Apple spent billions building the App Store infrastructure — the servers, the payment processing systems, the app review process, the developer tools. That cost was incurred once. Today, whether Apple processes 10 million transactions through the App Store or 10 billion, the fixed cost base of that infrastructure is largely the same. Every additional transaction flows through at an extremely high incremental margin because the infrastructure it requires is already paid for.

The same logic applies to iCloud, Apple Music, and Apple Pay. The servers exist. The engineering teams that maintain them are already employed. Adding one more subscriber costs Apple almost nothing relative to the revenue that subscriber generates. As the installed base of iPhone users grows and an increasing share of them subscribe to multiple services, revenue scales continuously while the cost base that supports it grows far more slowly. This is why Apple’s operating income has grown dramatically faster than its revenue over time — the leverage structure of the Services business ensures that as scale increases, each additional dollar of revenue converts to profit at a higher rate than the dollar before it.

What Keeps a Competitor Out

To understand why Apple’s position is structurally defensible, imagine a serious, well-funded competitor deciding to build a competing smartphone platform from scratch today.

The first problem they face is the app catalogue. The iOS App Store has over two million applications built by developers who chose iOS because that is where the paying customers are. To attract those developers to a new platform, the competitor needs to demonstrate a large, high-spending user base. But to attract a large user base, they need a compelling app catalogue. They cannot have the user base without the apps, and they cannot have the apps without the user base. This is the two-sided network trap — both sides of the marketplace need the other to already exist, and neither will arrive first.

The second problem is switching cost. Even if the competitor builds a compelling device and attracts some developers, they are asking consumers to abandon years of accumulated digital life. The consumer bears a real personal cost to switch that the competitor’s product has to overcome before it can be evaluated on its own merits.

The third problem is Apple Silicon. Apple designs its own chips, which means the performance and efficiency of Apple hardware is directly co-optimised with the software running on it in a way that a competitor using third-party chips simply cannot match. Qualcomm or MediaTek produce chips that must perform reasonably well across dozens of different manufacturers’ devices. Apple designs a chip specifically for one device running one operating system. The result is a performance advantage that compounds with every chip generation.

Why the Asset Is Durable

Apple’s chip design capability is highly durable across paradigm shifts for a straightforward reason: the capability is the thing, not the specific application of it. The same team infrastructure and process that designed the A-series chip for phones designed the M-series chip for laptops. Whatever computing form factor comes next — wearables, spatial computing, or something not yet named — the capability to design a chip deeply co-optimised with the software running on it travels with Apple into that new paradigm. In other words, as Li Lu mentions “the only constant in business is change”, and the ability for a company to handle change consistently well is the Ultimate Holy Grail.

The App Store ecosystem is conditionally durable — and the condition is simple: developers go where the users are.

When a developer decides where to build their app, they are making a business decision. They want to reach the largest possible number of paying customers with the least friction. In 2008, the answer was obvious: build for iPhone. That is where the users were. So developers built for iPhone, which made iPhone more valuable, which attracted more users, which attracted more developers. The flywheel spun.

But here is the vulnerability hiding inside that logic. Developers do not follow Apple. They follow users. And users follow the device they use most in their daily life. In the 2000s, that device was the phone. The phone became the center of people’s digital lives — the thing they reached for first thing in the morning and last thing at night. Whoever owned the dominant phone owned the relationship with the user.

Now ask a different question: what if the phone stops being that device?

Not because phones disappear — they won’t — but because something new becomes the primary way people interact with technology. Maybe it is a pair of glasses that overlays information onto the physical world. Maybe it is a watch that handles most of what a phone once did. Maybe it is something nobody has invented yet. Whatever it is, if people start spending more time on that device than on their phone, developers will start building for that device first. And whoever owns the dominant version of that device will own the user relationship Apple currently owns through the iPhone.

This is Apple’s Achilles heel. The flywheel — developers funding the ecosystem, users getting locked in through accumulated data and apps — only works as long as the iPhone is the device people’s digital lives are built around. The moment a new device displaces the phone as the center of daily life, Apple’s flywheel breaks unless Apple is the one who built that new device first.

This is why Apple invests in Vision Pro, Apple Watch, and AirPods — not because these products are currently as important as the iPhone, but because Apple is deliberately planting flags. They are seeding early user bases in each new device category so that if one of them becomes the next center of digital life, Apple is already there with users, which means developers will follow, and the flywheel spins up again. If a competitor builds that device first and reaches the scale where developers flock to it, Apple would find itself on the outside of its own trap — needing the users to attract the developers, and needing the developers to attract the users, with neither in hand.

Alphabet — One Engine, Three Fuel Lines

Start Here: The Engine Everything Feeds

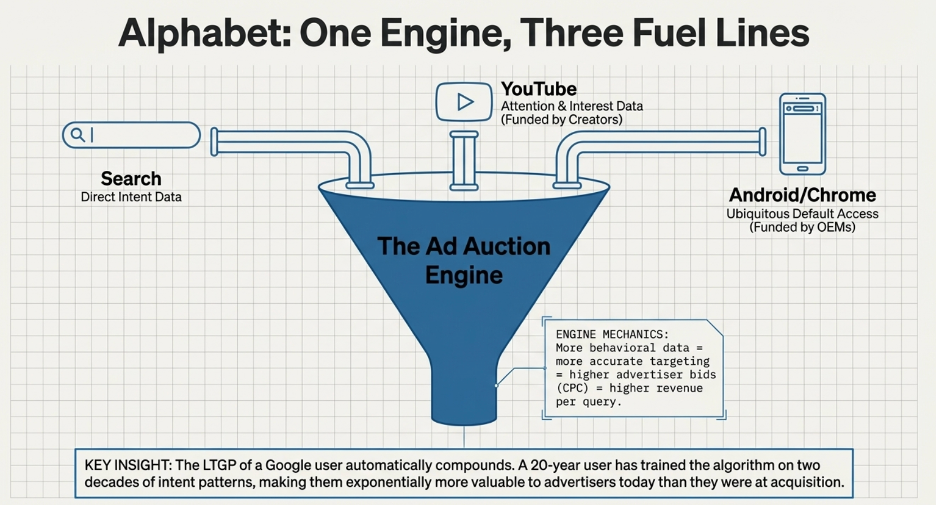

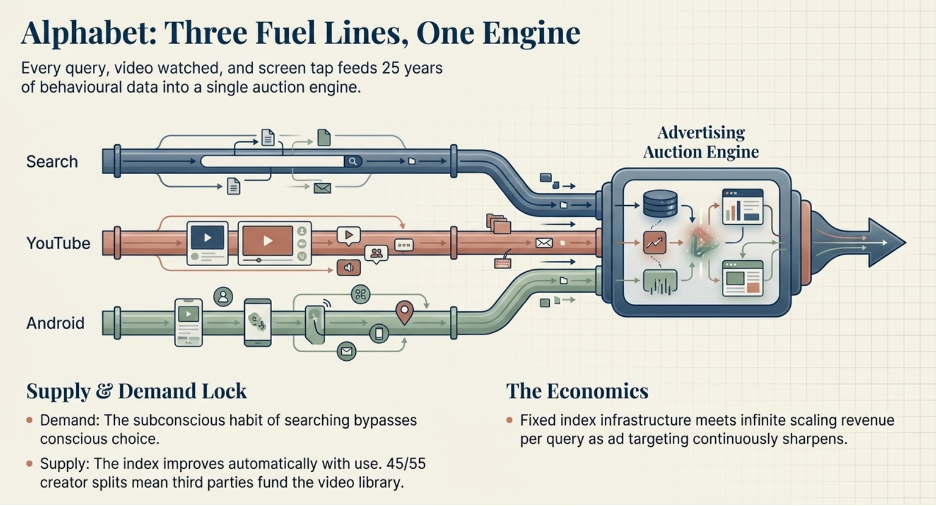

To understand how Google makes money, you need to understand one central mechanism first: the advertising auction.

When you search “best running shoes,” Google does not simply show you a list of shoe websites. It runs an instant auction among every shoe advertiser who wants to reach someone searching that exact phrase. Each advertiser bids for placement. The winner pays per click. Google collects that payment.